In this article we will quickly go through the steps required to make Excel's default calculation mode Manual. When working with websheets, it is advisable to keep the calculation mode set to Manual, because in Automatic mode Excel recalculates formulas after every cell change.

The default calculation mode can be set to Manual by following the steps below:

Step 1: Close all Excel workbooks and open a new workbook.

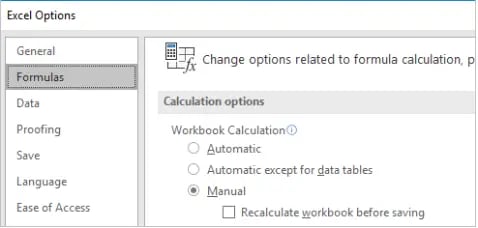

Step 2: Change the Excel calculation mode to Manual (File > Options > Formulas, then select Manual under Calculation options).

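Step 3: Save the workbook in `C:\Users\<User Profile Name>\AppData\Roaming\Microsoft\Excel\XLSTART`. Excel opens workbooks in this folder automatically at startup, and because the calculation mode is taken from the first workbook opened in a session, Excel starts in Manual mode.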

To revert the setting, simply delete the workbook from the XLSTART folder.

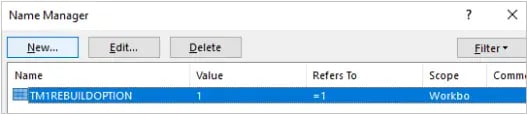
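If you are unsure where the XLSTART folder from Step 3 lives on a given machine, it can be derived from the `APPDATA` environment variable, which on Windows normally points to `C:\Users\<User Profile Name>\AppData\Roaming`. The minimal Python sketch below builds that path. The function name `xlstart_folder` is hypothetical, and the sketch assumes the default per-user XLSTART location shown in Step 3 rather than an alternate startup folder configured in Excel.

```python
import os
from pathlib import PureWindowsPath


def xlstart_folder(appdata: str) -> PureWindowsPath:
    """Return the per-user XLSTART folder under the given %APPDATA% directory.

    Assumes the default layout: %APPDATA%\\Microsoft\\Excel\\XLSTART.
    """
    return PureWindowsPath(appdata) / "Microsoft" / "Excel" / "XLSTART"


if __name__ == "__main__":
    # APPDATA is normally set in every interactive Windows user session.
    appdata = os.environ.get("APPDATA")
    if appdata:
        print(xlstart_folder(appdata))
    else:
        print("APPDATA is not set; run this in a Windows user session.")
```

Save the Step 2 workbook into the printed folder; deleting it from the same folder reverts the change.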

On a side note, for websheets created by slicing from the Cube Viewer, TM1RebuildOption (a workbook-level named variable) can be set to 0 before uploading them to the Applications folder. This prevents TM1 from rebuilding all the worksheets when the workbook is opened, which would otherwise cause a recalculation on each sheet. Rebuilding is not needed for sliced reports and is mainly required for Active Forms under specific circumstances. TM1RebuildOption is set to 1 by default when slicing.

Alternatively, to automate this, set the TM1RebuildDefault parameter in the tm1p.ini file to F so that TM1RebuildOption is 0 for all websheets created by slicing.
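Where the tm1p.ini change has to be rolled out to several machines, it can be scripted. The Python sketch below sets `TM1RebuildDefault=F` in the text of a tm1p.ini file, replacing an existing value or appending the parameter if it is missing. It is a sketch under assumptions: it treats tm1p.ini as a plain `key=value` file with a single section (so appending at the end is safe), it assumes UTF-8 encoding, and the helper names `set_tm1_rebuild_default` and `update_tm1p_ini` are hypothetical. The file's location differs between installations, so back it up before running anything against it.

```python
import re

# Matches an existing TM1RebuildDefault line; the key match is case-insensitive.
# [^\r\n]* stops at the line ending, so Windows \r\n endings are preserved.
_REBUILD_LINE = re.compile(
    r"^([ \t]*TM1RebuildDefault[ \t]*=)[^\r\n]*", re.IGNORECASE | re.MULTILINE
)


def set_tm1_rebuild_default(ini_text: str, value: str = "F") -> str:
    """Return ini_text with TM1RebuildDefault set to value (T or F)."""
    if value not in ("T", "F"):
        raise ValueError("TM1RebuildDefault expects T or F")
    if _REBUILD_LINE.search(ini_text):
        # Keep the original key spelling and spacing; replace only the value.
        return _REBUILD_LINE.sub(lambda m: m.group(1) + value, ini_text)
    # Parameter missing: append it on its own line (assumes a single section).
    sep = "" if not ini_text or ini_text.endswith("\n") else "\n"
    return f"{ini_text}{sep}TM1RebuildDefault={value}\n"


def update_tm1p_ini(path: str, value: str = "F", encoding: str = "utf-8") -> None:
    """Rewrite the tm1p.ini at path in place (encoding is an assumption)."""
    # newline="" disables newline translation so line endings are kept as-is.
    with open(path, encoding=encoding, newline="") as f:
        text = f.read()
    with open(path, "w", encoding=encoding, newline="") as f:
        f.write(set_tm1_rebuild_default(text, value))
```

Editing the text line by line, rather than through `configparser`, avoids rewriting key casing and dropping comments elsewhere in the file.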

No data showing in the Transaction Log even though logging is ON for the cube

- Ensure that GMT/UTC (Greenwich Mean Time / Coordinated Universal Time) is taken into account when selecting the Start Time and End Time. TM1 records log files in GMT/UTC, and the default start date and time is 00:01:00 GMT on the date the query is run.

- Ensure the element name strictly follows the TM1 Object Naming Conventions. It has been observed that transaction log queries on element names containing a slash (/) character returned no results.

- If the data was loaded or entered very recently, ensure that a Save Data has taken place. In TM1, even when the LOGGING property of a cube is set to YES, entered or loaded data is initially stored in the text file tm1s.log, which cannot be read while the server is running or through the Transaction Log viewer in TM1 Architect. The data remains in tm1s.log until a Save Data operation moves it from tm1s.log into a Tm1syyyymmddhhmmss.log file.

- Ensure the log files holding that cube's transactions (named Tm1syyyymmddhhmmss.log in the log file directory) have not been deleted.

- Manually open the log files (named in the format Tm1syyyymmddhhmmss.log) in the TM1 server's log directory and verify whether the transactions exist.

- Ensure the logging property is not disabled while loading data into the cube via TI; if it is, none of the data updates made by that TI are logged to disk.

- If logging is disabled in the Prolog, ensure it is re-enabled in the Epilog. Otherwise, not only is the current load left unlogged, but so is any data subsequently entered or loaded via other TIs.

- When updating metadata, ensure that neither DimensionDeleteAllElements nor the Recreate option (when using Maps) is used, especially if multiple TIs update the metadata of the same dimension. If either method is used and the new source does not contain an existing element that holds historical data (for which a log file is highly unlikely to still exist), TM1 deletes the element and the resulting data loss is not recorded in the transaction log, making the data very difficult to recover.
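As a worked example of the GMT/UTC point in the first item above: for a user at UTC+10:00, changes made at 09:00 local time on 5 March 2024 are logged at 23:00 UTC on 4 March, so a query using the default start of 00:01:00 GMT on 5 March would miss them. The minimal Python sketch below converts a local query window to UTC before you enter it. The function name `local_window_to_utc` is hypothetical, and the example uses a fixed UTC+10:00 offset with no daylight saving; for a real time zone, `zoneinfo.ZoneInfo` can supply the `tzinfo` instead.

```python
from datetime import datetime, timedelta, timezone


def local_window_to_utc(start_local: datetime, end_local: datetime) -> tuple[datetime, datetime]:
    """Convert a timezone-aware local query window to UTC, the time base of TM1 logs."""
    if start_local.tzinfo is None or end_local.tzinfo is None:
        raise ValueError("pass timezone-aware datetimes so the UTC offset is explicit")
    return start_local.astimezone(timezone.utc), end_local.astimezone(timezone.utc)


if __name__ == "__main__":
    # Hypothetical user in a fixed UTC+10:00 zone (no daylight saving).
    utc_plus_10 = timezone(timedelta(hours=10))
    start, end = local_window_to_utc(
        datetime(2024, 3, 5, 9, 0, tzinfo=utc_plus_10),
        datetime(2024, 3, 5, 17, 0, tzinfo=utc_plus_10),
    )
    print(start.isoformat(), end.isoformat())
```

Rejecting naive datetimes is deliberate: a window without an explicit offset is exactly the ambiguity that causes empty query results.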
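The checks on saved log files above (they have not been deleted, and the transactions are actually in them) can be partly scripted. The Python sketch below lists the saved Tm1syyyymmddhhmmss.log files in a log directory, ordered by the timestamp in their names, and searches them for records containing given names such as a cube and an element. The live tm1s.log is skipped, since it is not readable while the server is running. Assumptions to note: the helper names are hypothetical, the file-name timestamp is treated as UTC (consistent with TM1 logging in GMT/UTC), records are parsed as comma-separated fields with double quotes, and files are read as UTF-8 with undecodable bytes replaced. Names are matched against whole fields, case-insensitively, without relying on any particular column layout.

```python
import csv
import re
from datetime import datetime, timezone
from pathlib import Path

# Saved transaction logs: Tm1s + yyyymmddhhmmss + .log (tm1s.log does not match).
LOG_NAME = re.compile(r"^tm1s(\d{14})\.log$", re.IGNORECASE)


def saved_logs(log_dir: Path) -> list[tuple[datetime, Path]]:
    """Return saved logs as (timestamp, path), oldest first; timestamp assumed UTC."""
    found = []
    for path in log_dir.iterdir():
        m = LOG_NAME.match(path.name)
        if m:
            stamp = datetime.strptime(m.group(1), "%Y%m%d%H%M%S").replace(tzinfo=timezone.utc)
            found.append((stamp, path))
    return sorted(found)


def find_transactions(log_dir: Path, *names: str) -> list[tuple[str, int, list[str]]]:
    """Return (file name, record number, fields) for records containing every name."""
    wanted = [n.casefold() for n in names]
    hits = []
    for _, path in saved_logs(log_dir):
        with path.open(newline="", encoding="utf-8", errors="replace") as f:
            for recno, fields in enumerate(csv.reader(f), start=1):
                present = {field.casefold() for field in fields}
                if all(n in present for n in wanted):
                    hits.append((path.name, recno, fields))
    return hits
```

If `saved_logs` returns nothing for the period you expect, the files may have been deleted or a Save Data may not yet have run.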
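The Prolog/Epilog items above describe the usual TI pattern of calling `CubeSetLogChanges(cube, 0)` before a load and `CubeSetLogChanges(cube, 1)` after it. A simple text check can catch the case where logging is switched off and never switched back on. The Python sketch below is a hypothetical lint over the Prolog and Epilog text of a process, not a TM1 feature: it strips `#` comment lines, ignores control flow such as IF blocks or early exits, and compares variable arguments (for example `sCube`) by variable name only, without resolving their values.

```python
import re

# Matches CubeSetLogChanges(<cube>, 0|1), where <cube> is a quoted literal
# such as 'Sales' or a variable such as sCube.
LOG_CALL = re.compile(
    r"CubeSetLogChanges\s*\(\s*(?:'([^']*)'|(\w+))\s*,\s*([01])\s*\)",
    re.IGNORECASE,
)


def _strip_comments(code: str) -> str:
    """Drop TI comment lines (first non-blank character is #)."""
    return "\n".join(line for line in code.splitlines() if not line.lstrip().startswith("#"))


def _log_calls(code: str, flag: str) -> set[str]:
    """Return lower-cased cube names/variables passed to CubeSetLogChanges with flag."""
    names = set()
    for m in LOG_CALL.finditer(_strip_comments(code)):
        if m.group(3) == flag:
            literal, variable = m.group(1), m.group(2)
            names.add((literal if literal is not None else variable).lower())
    return names


def cubes_left_unlogged(prolog: str, epilog: str) -> set[str]:
    """Cubes switched to no-logging in the Prolog but not switched back on in the Epilog."""
    return _log_calls(prolog, "0") - _log_calls(epilog, "1")
```

An empty result only means every disabled cube has a matching re-enable call in the Epilog text; it cannot prove the Epilog actually runs on every path.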
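Before rebuilding a dimension from a new source, as warned in the last item above, it helps to list the existing elements the new source does not contain, since those are the elements (and data) a full rebuild would drop without a transaction-log entry. The Python sketch below compares two element lists. How you export them (for example from a subset or a TI output file) is left open, the function names are hypothetical, and `tm1_key` only approximates TM1's case- and space-insensitive name matching.

```python
def tm1_key(name: str) -> str:
    """Approximate TM1 element-name matching, which ignores case and spaces."""
    return name.replace(" ", "").casefold()


def elements_missing_from_source(existing: list[str], new_source: list[str]) -> list[str]:
    """Return existing elements absent from the new source, in their original order.

    These are the elements a rebuild that deletes everything and re-adds only
    the source elements would remove, along with the data they hold.
    """
    source_keys = {tm1_key(name) for name in new_source}
    return [name for name in existing if tm1_key(name) not in source_keys]
```

A non-empty result is a signal to switch from a delete-and-rebuild approach to one that only adds or updates elements.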
