- Mastering TM1DISTINCT: The Smart Way to Clean Up Your MDX Subsets

- The Ultimate IBM Planning Analytics (TM1) Training Roadmap for Beginners

- Why CIOs and CFOs Are Moving TM1 On-Prem to Planning Analytics SaaS on AWS

- Modernising TM1: Why Cloud Migration Alone Doesn’t Solve the Problem

- Smarter Cube Views in IBM Planning Analytics Workspace 3.1.3

- What's new in Cognos Analytics 12.1.x

IBM TM1 models can get messy fast, especially when you use alternate hierarchies and elements show up in multiple places. That's where the TM1DISTINCT MDX function quietly saves the day. Instead of blindly stripping out anything that "looks" duplicated, it understands TM1's hierarchies and only removes true duplicates – the exact same member in the exact same context.

The Problem: Why Regular DISTINCT Isn't Enough

Imagine you're building a dynamic subset of Products. You pull all products under All Products, then union it with a special "Focus Products" consolidation. Now some products appear twice in the raw result. With the classic DISTINCT, TM1 might collapse these duplicates in a way that hides the structure you actually care about.

This is especially problematic when working with:

- Alternate hierarchies that place elements in multiple logical positions
- Union operations that naturally generate overlapping sets
- Complex dimension structures where the same leaf element has different parents
- Dynamic subsets that combine multiple source sets.

TM1DISTINCT is smarter: it keeps the element where it appears in different meaningful places, and only cleans up genuine duplication caused by unions or repeated logic.

Understanding TM1DISTINCT vs DISTINCT

The key difference lies in context awareness:

- DISTINCT: Removes any duplicate entries that match another entry based on the member name. This can accidentally collapse elements that appear legitimately in different branches of the hierarchy.
- TM1DISTINCT: Removes duplicates only when they are truly identical – same element, same hierarchy path, same context. It respects the multi-hierarchical nature of TM1.

While the existing DISTINCT function removes duplicate elements from a set, the new TM1DISTINCT function removes duplicate members only if they are truly identical, including their parent context. This distinction is important because a single element can appear as multiple members in a hierarchy if the element has different parents.

This distinction becomes critical when your dimension design intentionally places elements in multiple locations for different analytical views.
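To make the difference concrete, here is a small Python sketch of the two de-duplication behaviours described above. It is a toy model, not TM1's implementation: a member is represented as an (element, parent) pair, and the parent names `Shirts` and `Brand A` are made up for illustration.

```python
from typing import NamedTuple


class Member(NamedTuple):
    element: str  # element name
    parent: str   # immediate parent, standing in for the hierarchy context


def distinct_by_name(members):
    """Name-based de-duplication: keep the first occurrence of each element."""
    seen, out = set(), []
    for m in members:
        if m.element not in seen:
            seen.add(m.element)
            out.append(m)
    return out


def distinct_by_context(members):
    """Context-aware de-duplication: drop a member only if element AND parent repeat."""
    seen, out = set(), []
    for m in members:
        if m not in seen:
            seen.add(m)
            out.append(m)
    return out


raw = [
    Member("Blue Shirt Medium", "Shirts"),
    Member("Blue Shirt Medium", "Brand A"),
    Member("Blue Shirt Medium", "Shirts"),  # genuine duplicate, e.g. from a union
]
by_name = distinct_by_name(raw)
by_context = distinct_by_context(raw)
print(len(by_name), len(by_context))
```

The name-based version keeps a single entry, while the context-aware version keeps one entry per parent and drops only the repeated (element, parent) pair.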

Practical Examples

Example 1: Basic Leaf-Level Filtering

    TM1DISTINCT(
        TM1FILTERBYLEVEL(
            {Descendants([Product].[All Products])},
            0
        )
    )

Here, you get a clean leaf-level list of products, free of accidental duplication, but still faithful to how the hierarchy is built. The function returns all leaf-level descendants while removing any technical duplicates that might arise from the query logic.

Example 2: Combining Multiple Sets (Union Scenario)

    TM1DISTINCT(
        { TM1SubsetAll([Customer]) + [Customer].[Key Accounts] }
    )

You end up with each real customer only once, even though "Key Accounts" is already part of the full customer list. This is where TM1DISTINCT truly shines – it preserves your intentional hierarchy structure while cleaning up the noise.

Example 3: Alternate Hierarchy Preservation

    TM1DISTINCT(
        TM1FILTERBYLEVEL(
            {Descendants([Cost Center].[Total Company])},
            0
        )
    )

Leaf-level cost centers under Total Company are returned, and any technical duplicates from unions or repeated selection logic are cleaned up safely. The alternate hierarchy placements remain intact.

Real-World Impact

Consider a retail company with a Product dimension that has both:

- A Standard Hierarchy: All Products → Category → Subcategory → SKU
- An Alternate Hierarchy: All Products → Channel → Brand → SKU

The same SKU (say, "Blue Shirt Medium") legitimately appears under both "Subcategory" and "Brand." Using DISTINCT here might collapse one of these occurrences, breaking reporting by channel. Using TM1DISTINCT keeps both occurrences because they represent different analytical contexts.

Best Practices

- Use TM1DISTINCT when building dynamic subsets that combine multiple sets or work with alternate hierarchies
- Skip it only for simple, single-hierarchy subsets where standard DISTINCT would work fine
- Combine with TM1FILTERBYLEVEL to ensure clean, context-aware filtering
- Test with your actual dimension structure to verify the results match business expectations.
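If you generate subset MDX from scripts, a small helper keeps the TM1DISTINCT and TM1FILTERBYLEVEL pattern consistent across dimensions. The sketch below only builds the expression string shown in the examples above; the helper name `leaf_subset_mdx` is hypothetical, and it assumes the dimension and element names contain no closing square bracket.

```python
def leaf_subset_mdx(dimension: str, root: str) -> str:
    """Build the TM1DISTINCT + TM1FILTERBYLEVEL expression for one dimension.

    Assumes names contain no ']' character, so no escaping is attempted.
    """
    return (
        "TM1DISTINCT("
        f"TM1FILTERBYLEVEL({{Descendants([{dimension}].[{root}])}}, 0)"
        ")"
    )


mdx = leaf_subset_mdx("Product", "All Products")
print(mdx)
```

The resulting string can then be pasted into a subset editor and checked against your real dimension before it goes into production logic.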

Conclusion

TM1DISTINCT represents a maturation of MDX handling in Planning Analytics, acknowledging that TM1's rich hierarchy support requires intelligent de-duplication. By using it in your dynamic subsets, you ensure clean data without sacrificing the intentional structure your dimensions are built upon. Your business users – and your data model – will thank you for it.

References

- IBM Planning Analytics Documentation. (2024). TM1DISTINCT( <set> ). IBM. https://www.ibm.com/docs/en/planning-analytics/3.1.0?topic=tsmf-tm1distinct-set
- IBM Planning Analytics. (2024). TM1 specific MDX functions. IBM. https://www.ibm.com/docs/en/planning-analytics/2.0.0?topic=mfs-tm1-specific-mdx-functions

IBM Planning Analytics (TM1) is a powerful enterprise planning and analytics platform used for budgeting, forecasting, and reporting. This premium roadmap is designed to take you from beginner to job-ready TM1 developer with practical skills, real-world use cases, and interview preparation.

Phase 1: Fundamentals (Week 1–2)

- Understand OLAP concepts (Dimensions, Cubes, Measures)
- Explore TM1 Architecture (Server, PAW, TM1 Web)
- Install and navigate Planning Analytics Workspace (PAW)
- Understand basic cube and dimension structures

Phase 2: Core TM1 Development (Week 3–5)

- Create Dimensions, Hierarchies, and Attributes
- Build Cubes and load sample data
- Understand Rules (SKIPCHECK, FEEDERS)
- Learn calculation logic and aggregation

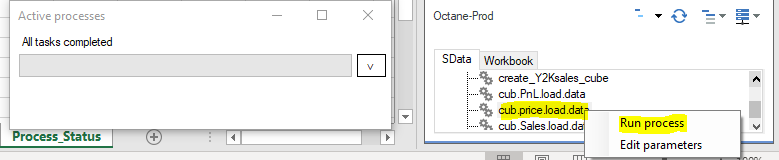

Phase 3: TurboIntegrator (TI) Processes (Week 6–7)

- Learn TI structure: Prolog, Metadata, Data, Epilog
- Load data from CSV/DB into cubes
- Use ASCIIOutput for debugging
- Handle errors and optimize performance
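As a mental model for the four-part TI structure, the Python sketch below mimics the order of work: a Prolog that runs once, a Metadata pass and a Data pass over the same source records, and an Epilog that runs once at the end. It is an analogy rather than TurboIntegrator code, and the column names (`product`, `month`, `sales`) and the in-memory "cube" dictionary are assumptions made for illustration.

```python
import csv
import io


def run_load(csv_text):
    """Mimic the Prolog -> Metadata -> Data -> Epilog order of a TI process."""
    log = []

    # Prolog: runs once, before any source record is processed.
    elements, cube = set(), {}
    log.append("prolog")
    rows = list(csv.DictReader(io.StringIO(csv_text)))

    # Metadata: first pass over the records, used to register elements.
    for row in rows:
        elements.add(row["product"])
    log.append("metadata")

    # Data: second pass over the same records, used to write values.
    for row in rows:
        try:
            cube[(row["product"], row["month"])] = float(row["sales"])
        except ValueError:
            # Stand-in for writing a debug line, as ASCIIOutput would in TI.
            log.append(f"skipped bad record: {row['product']}")
    log.append("data")

    # Epilog: runs once, after the last record.
    log.append(f"epilog: {len(cube)} cells loaded")
    return elements, cube, log


source = "product,month,sales\nP1,Jan,100\nP2,Jan,abc\n"
elements, cube, log = run_load(source)
print(log)
```

Separating element creation from value loading is the point of the sketch: by the time the second pass writes values, every element it needs already exists.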

Phase 4: TM1 MDX (Week 8–9)

- Create dynamic subsets using MDX, SubsetCreateByMDX, SubsetMDXSet
- Use TM1 functions: TM1SUBSETALL, TM1FILTERBYLEVEL
- Understand DISTINCT vs TM1DISTINCT, Filtering data by value
- Work with alternate hierarchies

Phase 5: Advanced Topics (Week 10–12)

- Security: Groups, Users, Cube Security
- Chores and Scheduling
- Performance tuning and feeders optimization
- Sandboxes and versioning

Real-Time Project (Must Do)

Build a Sales Planning Model:

- Dimensions: Time, Product, Region
- Create Cube: Sales Planning
- Load data using TI
- Apply rules for forecasting
- Build reports in PAW

Best Practices

- Always use feeders efficiently
- Keep dimensions optimized and clean
- Use TopCount, Filtering data by value in subsets
- Debug using logs and ASCIIOutput
- Use meaningful naming conventions

Interview Preparation Tips

- Be ready to explain Cube, Dimension, Rule, and TI process
- Know the difference between feeders and SKIPCHECK
- Practice MDX queries
- Prepare an explanation of a real project

Useful Resources

Official IBM Documentation: https://www.ibm.com/docs/en/planning-analytics

IBM Product Page: https://www.ibm.com/products/planning-analytics

Octane Page: https://blog.octanesolutions.com.au

By following this roadmap and practicing consistently, you can become a TM1 developer within 3 months. Focus on hands-on implementation and real-world projects.

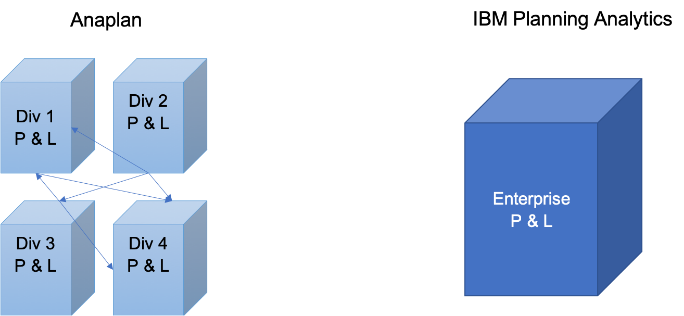

TM1 v11 on-prem has served organizations well for years. It’s fast, flexible, and trusted. But the expectations around enterprise platforms have changed. Today, leadership teams want lower risk, predictable costs, and systems that evolve without constant reinvestment.

That’s where Planning Analytics SaaS on AWS fits in—not as a new planning engine, but as a better way to run one.

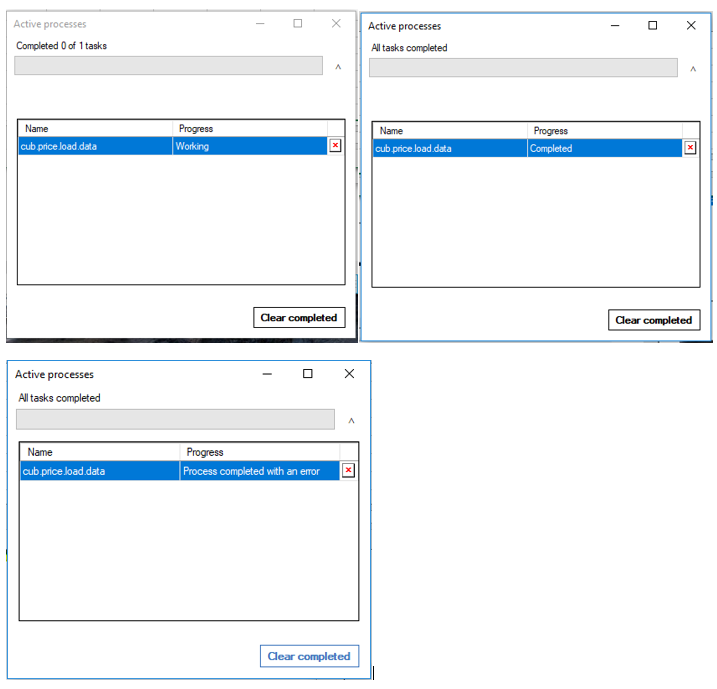

You Stop Running Infrastructure

With on-prem TM1, you’re not just running a planning system—you’re running servers, storage, backups, patches, and disaster recovery. Even when nothing breaks, there’s ongoing effort and risk.

Planning Analytics SaaS changes that. The platform is fully managed by IBM on Amazon Web Services. Availability, backups, and resilience are built in. IT teams spend less time maintaining platforms and more time supporting the business.

Costs Become Predictable

On-prem costs rarely end with licenses. Hardware refreshes, DR environments, security fixes, and upgrade projects add up quietly over time.

SaaS replaces that with a subscription model. No capital spend, fewer surprises, and much clearer long-term cost visibility—something finance teams appreciate immediately.

Lower Risk, Stronger Security

In an on-prem setup, availability and security depend heavily on how much time and money the organisation can invest.

In Planning Analytics SaaS, resilience and security are standard. High availability, encryption, and regular security updates are part of the service, not optional extras. This reduces operational risk and simplifies audits.

No More Upgrade Projects

Upgrading on-prem TM1 is disruptive, which is why many systems stay untouched for years.

With SaaS, updates just happen. New features arrive without downtime or upgrade programs. The platform stays current without forcing the business into large, risky change initiatives.

Performance Scales When It Matters

Planning systems are pushed hardest during budgets and forecasts, but on-prem hardware is fixed year-round.

SaaS handles peaks without permanent over-investment. Performance stays consistent during critical cycles, without IT having to guess future capacity needs.

Faster Time-to-Value

Standing up new environments or supporting business growth takes time when infrastructure is involved.

With SaaS, environments are available faster, projects move more quickly, and new requirements can be supported without long lead times. This improves agility across finance and operations.

Cleaner, Modern Integration

Traditional file-based integrations are fragile and slow.

Planning Analytics SaaS supports secure, API-based integration, making it easier to connect planning with ERP systems and cloud data platforms. This aligns better with modern enterprise data strategies.

Better Governance by Design

SaaS comes with boundaries—no server access, no unsupported scripts, no hidden workarounds.

While that requires adjustment, it results in cleaner architectures, fewer production issues, and stronger governance. Over time, most organisations see this as a benefit, not a limitation.

The Bottom Line

Moving from TM1 on-prem to Planning Analytics SaaS on AWS isn’t about changing how you plan. It’s about reducing risk, simplifying operations, and making costs and performance more predictable.

For CIOs, it means less infrastructure and lower operational exposure.

For CFOs, it means clearer costs, better scalability, and fewer surprises.

In short, it’s a more modern way to run a planning platform—without losing what made TM1 valuable in the first place.

IBM Planning Analytics (TM1) remains one of the most powerful planning and modelling engines used by Finance teams worldwide. Yet many organisations eventually experience frustration with their TM1 environments — slow performance, painful upgrades, rising support costs, and the quiet return of Excel.

Contrary to popular belief, these challenges are rarely caused by TM1 itself.

They are symptoms of a modernisation gap.

The Hidden Drift Problem in TM1 Environments

TM1 models often start clean, efficient, and purpose-built. Over time, however, incremental changes accumulate:

-

New dimensions added without structural discipline

-

Rules layered onto legacy logic

-

TI processes expanded beyond their original design

-

Reporting logic intertwined with source data

-

Upgrade cycles deferred

-

Risk and compliance requirements

-

Upgrade tolerance

-

Internal IT capabilities

-

Cost predictability objectives

-

Metadata-driven logic instead of hard-coded processes

-

Modular cube design separating input, calculation, and reporting

-

Decoupled reporting layers

-

Performance-first feeder strategies

-

Cloud-aware security models

-

Structured change management practices

-

Issues detected late

-

Knowledge concentrated with individuals

-

Upgrades treated as disruptive events

-

Costs becoming unpredictable

What emerges is not a broken system — but a fragile one.

Performance declines. Change cycles slow. Complexity rises.

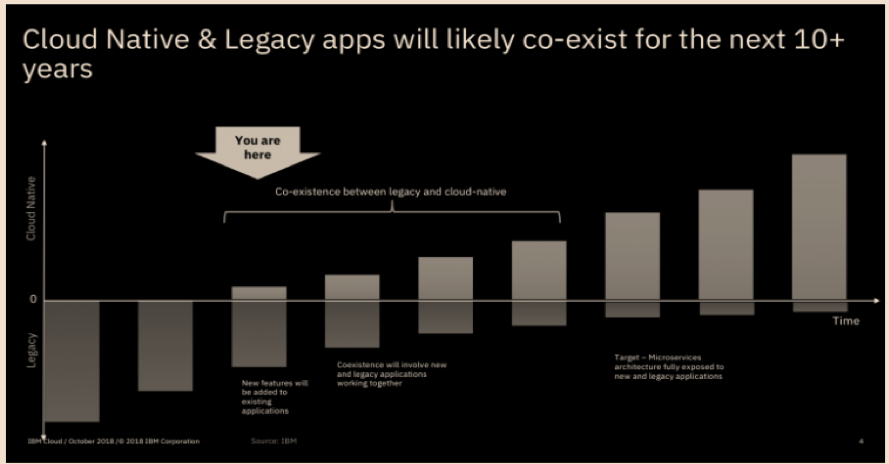

The Common (But Incomplete) Response: “Move to Cloud”

When issues surface, organisations frequently default to infrastructure decisions:

✔ Move from on-premise to cloud

✔ Adopt SaaS

✔ Change hosting providers

While these shifts reduce infrastructure management overhead, they do not automatically modernise the model.

A poorly structured TM1 architecture behaves the same way regardless of where it is hosted.

Better infrastructure cannot compensate for design inefficiencies.

True TM1 Modernisation Requires Three Pillars

Sustainable TM1 environments align three interdependent areas.

1. Infrastructure Modernisation

Infrastructure choices should reflect:

Cloud platforms reduce maintenance effort — but they are only the foundation.

2. Architecture Modernisation (The Critical Lever)

Architecture modernisation is where the largest gains are realised.

Modern TM1 models typically prioritise:

Without architectural evolution, cloud migration simply relocates existing constraints.

3. Support Model Modernisation

Traditional break-fix support models introduce systemic risk:

Modern support approaches focus on:

✔ Proactive monitoring

✔ SLA-driven response models

✔ Continuous optimisation

✔ Upgrade lifecycle management

✔ Knowledge transfer

This operating philosophy underpins Octane Blue, our proactive TM1 managed services model.

Why This Matters for Finance Leaders

Modernised TM1 environments typically deliver:

✅ Faster budgeting and forecasting cycles

✅ Lower data errors

✅ Safer upgrade paths

✅ Reduced operational friction

✅ More predictable support costs

Most importantly, Finance teams regain time, stability, and confidence.

Final Perspective

Cloud migration is valuable — but it is not modernisation by itself.

Real TM1 modernisation redesigns how the model scales, performs, and evolves with the business.

📘 Download the TM1 Modernisation Roadmap (PDF)

📊 Request a Free Upgrade & Risk Estimate

Introduction

IBM Planning Analytics Workspace 3.1.3 has made a noticeable difference to how I work with cube views every day. Instead of dragging views around and hoping I do not overwrite something important, I now use the built-in view selector to switch between different saved views in seconds.

With Undo and Redo available directly in the cube view, it feels safe to try new layouts, pivots, and filters because I can always step back if the result is not what I expected. Together, these changes reduce friction in my analysis, keep my report layouts intact, and help me move much faster from “idea” to “answer”.

Part 1: From Drag-and-Drop to Smart View Selection

Before 3.1.3

Analysts had to drag views from the left panel onto the grid, risking accidental overwrites and losing custom filters and formatting with each swap. Alternatively, they could click the three dots and choose ‘Add View’. Either way, finding the right view in a cluttered list of auto-generated names wasted time.

Now in 3.1.3

The new View selector dropdown lets you switch views instantly while preserving layout, filters, and formatting. Search by keyword to find relevant views fast.

Key benefits:

- Layout stays intact when switching views
- Fast scenario comparisons (Actuals → Budget → Forecast)
- Searchable view list for large libraries

Part 2: Undo and Redo for Cube Views

Before 3.1.3

Changes to cube explorations were permanent. Pivoting dimensions, reordering members, or adjusting filters felt risky, so modelers hesitated to experiment and often retreated to Architect.

Now in 3.1.3

Native Undo/Redo buttons on the toolbar let you experiment safely. Move dimensions, adjust filters, and reorder members with confidence—revert instantly if needed.

What you can undo/redo:

- Move dimensions between context, rows, and columns
- Expand/collapse hierarchies
- Apply/remove filters
- Reorder dimensions
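Undo/Redo of this kind is commonly built on two stacks of previous states. The sketch below shows that general technique in Python; it is not a description of how Planning Analytics Workspace implements the feature, and the `ViewHistory` class and the dictionary used for the view layout are hypothetical.

```python
class ViewHistory:
    """Track view states with an undo stack and a redo stack."""

    def __init__(self, initial):
        self.state = initial
        self._undo = []
        self._redo = []

    def apply(self, new_state):
        """Record the current state, then switch to the new one."""
        self._undo.append(self.state)
        self.state = new_state
        self._redo.clear()  # a fresh change invalidates the redo trail

    def undo(self):
        if self._undo:
            self._redo.append(self.state)
            self.state = self._undo.pop()

    def redo(self):
        if self._redo:
            self._undo.append(self.state)
            self.state = self._redo.pop()


view = ViewHistory({"rows": "Product", "columns": "Time"})
view.apply({"rows": "Time", "columns": "Product"})  # pivot rows and columns
view.undo()
after_undo = dict(view.state)
view.redo()
after_redo = dict(view.state)
print(after_undo, after_redo)
```

Clearing the redo stack on every new change is the usual design choice: once you branch off in a new direction, the old "future" no longer applies.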

Quick Wins

- For report builders: Use the view selector to build flexible reports; users switch views at runtime.
- For modelers: Experiment freely with Undo/Redo; refine layouts through rapid iteration.

Conclusion

- Planning Analytics Workspace 3.1.3 simplifies cube view workflows with a smart view selector and Undo/Redo. These features accelerate analysis, reduce friction, and help teams transition confidently from legacy tools to modern Workspace.
- One drawback: the view selector has to be enabled for each view separately. A universal setting that enables it for all views would be welcome.

References

[1] IBM. What's new in modelling – 3.1.3. IBM Documentation, 2025. https://www.ibm.com/docs/en/planning-analytics/3.1.0?topic=2025-whats-new-in-modeling-313

[2] IBM. What's new in Planning Analytics Workspace 3.1.x? IBM Documentation, 2025. https://www.ibm.com/docs/en/planning-analytics/3.1.0?topic=workspace-whats-new-in-planning-analytics

Dashboards:

Distinction between Display and Use value in dashboards:

You can now define Display and Use values in data modules.

The Display values are the values that you can see in a dashboard UI; the Use values are primarily for filtering logic.

Previously, defining the Display and Use values was possible only in FM packages. This feature brings the same capability to data modules and enhances consistency across dashboards and reporting. You can interact with readable values while filters apply precise underlying identifiers. For example, you can select a Customer Name value in the dashboard UI and apply a filter that is based on the Customer ID value.
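The idea of showing one value while filtering on another can be sketched in a few lines of Python. This is only an illustration of the concept, not Cognos Analytics code; the sample customers, orders, and field names are invented.

```python
customers = [
    {"id": "C001", "name": "Acme"},
    {"id": "C002", "name": "Globex"},
]
orders = [
    {"customer_id": "C001", "amount": 120.0},
    {"customer_id": "C002", "amount": 80.0},
    {"customer_id": "C001", "amount": 40.0},
]

# Display value (what the user sees) mapped to Use value (what the filter applies).
display_to_use = {c["name"]: c["id"] for c in customers}


def filter_orders(selected_display):
    """Filter on the underlying identifier for the label the user picked."""
    use_value = display_to_use[selected_display]
    return [o for o in orders if o["customer_id"] == use_value]


acme_total = sum(o["amount"] for o in filter_orders("Acme"))
print(acme_total)
```

The user only ever picks a readable label, while the comparison itself runs on the identifier.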

Manage filter size and filter area visibility:

You can now resize filter columns and hide filter areas to improve the arrangement and visibility of these elements in dashboards.

For more information on resizing filter columns in the All tabs and This tab filter areas, see Resizing filters.

For more information on hiding and reshowing the filter areas, see Hiding and showing filter areas.

Option for users to export visualisation data to a CSV file:

You can now allow your users to export visualisation data to a .csv file.

To enable this feature, open a dashboard or a report that contains a visualisation, go to Properties > Advanced, and turn on the Allow users access to data option.

When this option is active, users can open the data tray and download the .csv file from the Visualisation data tab. Enabling this feature also adds an Export to CSV button and Export to CSV icon to the toolbar. The button is visible to the users and to the editors. If you turn off this feature, the button disappears.

Responsive dashboard layout:

The 12.1.1 release introduces a responsive layout feature for dashboards.

This feature enhances the authoring experience and usability across different devices by optimising the dashboard layout for various screen sizes, including mobile devices. You can also use it for grouping the content and organising visualisations.

To use a responsive layout, go to the Responsive tab when you create a new dashboard and select one of the available templates, as seen in the following image:

The responsive dashboard layout feature comes with the following key capabilities:

- Layout selection:

You can now choose between responsive and non-responsive layouts when you create a new dashboard.

- Adaptive widgets:

If you change the position of a panel or resize the dashboard window, the widget automatically adapts its placement and alignment.

- Intuitive resizing and swapping:

Smart alignment algorithms facilitate smooth layout transitions, while an intuitive interface makes the authoring experience smoother and more efficient.

- Drop zones for precise widget placement:

Each layout cell supports five drop zones: top, right, bottom, left, and center. You can use these zones for more control over widget placement.

- Cell deletion:

Dashboards now differentiate between empty and populated cells for accurate deletion.

- Data population:

The feature mirrors data population from the non-responsive layouts and supports drag-and-drop and slot item selection. If you use the copy and paste or click-add-to functions, the feature uses smart placement logic to make sure that it adds the content to empty cells. It can also split the data between existing cells.

- Window resizing:

You can now dynamically resize a dashboard, and its layout automatically adapts to the new screen size. This includes a transition to single-column or two-column layouts on smaller screens for enhanced readability.

- Printing to PDF files:

You can print the dashboard to a .pdf file in View mode and in the New Page mode.

- Nested dashboard widgets:

You can use the nested dashboard widgets as standard widgets or as containers for grouping and organising the content.

To successfully implement the responsive layout, you must make sure that the dashboard uses manifest version 12.1.1 or later and confirm widget boundaries by employing the layout grid. However, if the widgets do not render correctly, check the layout specification and verify the feature support.

Secure dashboard consumption with execute and traverse permissions:

Users can now consume dashboards with only execute and traverse permissions granted to the presented data; no read permission is required.

In previous releases of IBM® Cognos® Analytics, the read permission was required for dashboard consumption. This could compromise sensitive data because dashboard consumers could edit and copy such data.

Important: To strengthen the protection of data that you want to be consumed by other users, modify these users' permissions from Read to Execute and Traverse before you migrate to Cognos Analytics 12.1.1.

However, the execute and traverse permissions put some restrictions on actions that can be taken by a dashboard consumer. Therefore, the consumer cannot perform the following actions:

- Drill up and down
- Export
- Narrative insights
- Navigate
- Open dashboards
- Paste copied widgets into another dashboard.
- Pin
- Save
- Save as a story
- See the full data set in the data tray.
- Share
- Switch to Edit mode.
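The restriction list above amounts to a simple permission check, sketched below in Python. This is a simplified model, not Cognos Analytics code: the action labels are shorthand for the items in the list, and the assumptions that read permission allows every action and that any action outside the list stays available are mine.

```python
# Shorthand labels for the restricted actions listed above (hypothetical names).
RESTRICTED_ACTIONS = {
    "drill", "export", "narrative insights", "navigate", "open dashboards",
    "paste widgets", "pin", "save", "save as story", "full data tray",
    "share", "edit mode",
}


def can_perform(action, permissions):
    """Return True if a dashboard consumer may perform the action."""
    if "read" in permissions:
        return True  # assumption: read permission lifts the restrictions
    if {"execute", "traverse"} <= permissions:
        return action not in RESTRICTED_ACTIONS
    return False  # without execute and traverse, the dashboard cannot be consumed


consumer = {"execute", "traverse"}
print(can_perform("export", consumer), can_perform("filter", consumer))
```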

Personalised dashboard views:

The 12.1.1 release comes with a new feature for simplified customisation of complex dashboard designs.

A dashboard view references a base dashboard and stores your individual filters and settings. It supports the following customisation features:

- Filters
- Brushing, excluding local filters on individual visualisations
- Bookmarks, including the ability to set the currently selected tab

You can create dashboard views only from an open dashboard and from within the dashboard studio, and only against saved dashboards. If the open dashboard is saved, a Save as dashboard view option appears in the save menu:

This operation works as a standard Save as operation. When the operation is complete, the original dashboard is still displayed. To access the new dashboard view, you must open it manually from the content navigation panel.

The dashboard views have a different icon from regular dashboards. It includes an eye overlay, which is similar to a report views icon:

You can customise a dashboard view by changing the brushing, filter, or bookmarks, and then saving the view. However, the dashboard view is essentially in a Consume mode, and you can't switch to the authoring mode. It also means that you can't access the metadata tree of the dashboard view or add extra filter controls to the filter dock. If you want your users to apply filters in a metadata column, you must first add that column to the base dashboard, even if you don't initially select any filter values.

Any updates that you make to a base dashboard automatically appear in the dashboard view, except for the custom options that you define in the dashboard view itself. You can see the changes the next time that you open the dashboard view. For example, if you delete a visualisation from the main dashboard, it no longer appears in the dashboard view.

The Save as dashboard view operation also creates a non-editable bookmark in the dashboard view. This bookmark includes the state of filters and brushing that you applied in the dashboard at the time when the dashboard view was created or last saved. When you open the dashboard view and don't select any other bookmark, this bookmark is automatically selected.

The dashboard views not only consume bookmarks from the base dashboards, but they can also have their own bookmarks. You can create them in the same way as in standard dashboards. The Cognos® Analytics UI differentiates between Shared bookmarks, which are all bookmarks from the base dashboards, and My bookmarks, which are bookmarks from the dashboard view.

If you delete the base dashboard, you can't open the dashboard view, and its entry is disabled in the content navigation. All attempts to access that dashboard view by entering its URL address directly into a browser result in an error message. Also, the Source dashboard property appears as Unavailable, for example:

Reporting:

Enhanced clarity of reporting templates view:

Release 12.1.1 enhances the user experience of navigating through report templates.

When you open the Create a report page, it shows only templates that match the Report filter value. This change hides all Active Reports templates by default and makes only the Report templates visible.

You can use the Filter icon to customise your view. To maintain a personalised experience, Cognos® Analytics saves your selection in local storage or by using the cached value.

This enhancement also comes with upgraded filter labels, which reflect the current filter value, for example: Showing All Templates, Showing Report Templates, or Showing Active Report Templates.

Manage queries in the report cache:

You can manage which data queries are included in the report cache to control report performance.

For more information on the report cache, see Caching Prompt Data.

For example, user-dependent queries, that is, queries to data sources that not all users can access, might degrade report performance.

You can exclude report performance-degrading queries from cached prompt data by setting the value of the Report cache property to No in the query property pane:

- In the navigation menu, click Report, then Queries in the drop-down menu.
- In the Queries pane, select a query.
- In the Properties pane, in the QUERY HINTS section, click the Report cache property.
- Select one of the following values:
  - Default - the query is included in the report cache.
  - Yes - equivalent to the Default value.
  - No - the query is excluded from the report cache.

For multi-level queries, this value is transferred from the lowest-level to the highest-level query.
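The effect of the three property values can be summarised in a short Python sketch. It models only the rules stated above and is not Cognos Analytics code; the query names are invented, and treating a multi-level chain as taking the value of its lowest-level query is my reading of the sentence above.

```python
def included_in_report_cache(report_cache: str) -> bool:
    """Default and Yes include the query in the report cache; No excludes it."""
    if report_cache not in ("Default", "Yes", "No"):
        raise ValueError(f"unknown Report cache value: {report_cache}")
    return report_cache != "No"


def effective_value(chain):
    """chain lists Report cache values from the highest-level to the lowest-level query.

    Assumption: the lowest-level value is the one that carries up the chain.
    """
    return chain[-1]


queries = {"PromptCountries": "Default", "UserOrders": "No", "PromptProducts": "Yes"}
cached = [name for name, value in queries.items() if included_in_report_cache(value)]
print(cached)
```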

PostgreSQL audit deployment and model:

The 12.1.1 release comes with a new capability for enhanced auditing and reporting in environments that use PostgreSQL as the auditing database.

You can use a dedicated Framework Manager model and a deployment package to run reports against a PostgreSQL audit database. These resources provide a structure for analysing the audit data and creating insightful reports.

You can access the new samples in the following locations within your installation directory:

<installation>/samples/Audit_samples/Audit_Postgres

<installation>/samples/Audit_samples/IBM_Cognos_Audit_Postgres.zip

To use the PostgreSQL audit samples, make sure to create a data source connection named Audit_PG.

Master detail relationships with 11.1 visualisations:

You can use 11.1 visualisations in master detail relationships to present details for each master query item in a consolidated, insightful way.

For more information on master detail relationships, see Master detail relationships.

For the 11.1 visualisations as the detail objects, you can now choose if the same automatic value range is used in all visualisation instances in a master detail relationship. You apply your choice to the Same range for all instances of the chart option. To turn this option off or on, perform the following steps:

- Select a visualisation for which a master detail relationship is created.
- In the Data Set pane of this visualisation, click the data item that defines values on the value axis.
- In the Properties pane, under GENERAL, click the More icon (three dots) to the right of the Value range property.
- In the Value range window:
  - Select Computed.
  - Turn the Same range for all instances of the chart option on to use the global extrema (the widest value range across all instances), or off to use the local extrema (the value range of each individual visualisation).
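The difference between global and local extrema is easy to see with numbers. The Python sketch below computes both for two hypothetical chart instances; the region names and values are invented, and the code only illustrates the arithmetic, not how Cognos Analytics computes its axes.

```python
# One list of values per visualisation instance (hypothetical data).
instances = {
    "North": [10, 40, 25],
    "South": [5, 90, 60],
}


def local_ranges(data):
    """Option off: each instance gets its own (min, max) value range."""
    return {name: (min(values), max(values)) for name, values in data.items()}


def global_range(data):
    """Option on: every instance shares the (min, max) across all instances."""
    all_values = [v for values in data.values() for v in values]
    return (min(all_values), max(all_values))


print(local_ranges(instances))
print(global_range(instances))
```

A shared range makes the instances directly comparable at a glance, while local ranges make the variation inside each instance easier to read.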

If you’re a TM1 professional and have been near the finance or FP&A world lately, you’ve probably heard the buzzword of the season: Agentic AI.

It sounds fancy, and you must have wondered why everyone is suddenly talking about it. Honestly, it’s just AI that doesn’t sit around waiting for you to poke it. It does things, proactively and automatically.

And when you mix that with platforms like IBM Planning Analytics / TM1, things start getting interesting.

So… What Exactly Is Agentic AI?

Imagine if your TM1 rules, processes, and chores had a brain.

Not just “if X then Y”, but something that can:

- Notice something’s off
- Decide what to do
- Do it
- Tell you what it did
- Learn from the outcome

That’s, in a nutshell, agentic AI in the TM1 paradigm.

Think of it as giving your FP&A stack its own mini team member, minus the coffee breaks and the usual shenanigans you have to put up with daily.

In practical terms, agentic AI can actually help, rather than just being buzzword decoration floating around in everyone’s LinkedIn posts and conversations.

Below are a few basic, yet very important, things that agentic AI is really good at:

1. Automated Data Babysitting (Finally!)

Every TM1 admin knows the pain: source system changes, missing records, late files… chaos.

Agentic AI can:

- Watch data pipelines for delays

- Fix formatting issues on the fly for your TI process

- Alert you before the morning refresh explodes

Basically, your nightly chore just hired an assistant.

2. “Hey, Something’s Wrong” Alerts (That Make Sense)

Instead of a typical TM1 process error message that looks like it was written in 1995, agentic AI can:

- Spot outliers, bad allocations, weird spikes – something you would do manually otherwise

- Compare them against historical patterns

- Tell you, in plain English, why it’s weird

Something along the lines of:

“Hey, sales in APAC are 4x higher than normal for Mondays. It could be a missing filter. Want me to check?”

Yes, please.
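As a rough illustration of how such an alert could be produced, the sketch below compares today’s value with recent history using a simple z-score and then words the result in plain English. The figures, the threshold of three standard deviations, and the function name are assumptions for the example; real agents would typically use richer models.

```python
from statistics import mean, stdev


def explain_outlier(history, today, label, z_limit=3.0):
    """Compare today's value with history and describe it in plain English.

    Returns None when the value is within z_limit standard deviations.
    """
    avg, spread = mean(history), stdev(history)
    if spread == 0 or avg == 0:
        return None  # flat or zero history: this simple check stays silent
    z = (today - avg) / spread
    if abs(z) < z_limit:
        return None
    ratio = today / avg
    return (f"{label} is {ratio:.1f}x the usual level "
            f"({today:,.0f} vs an average of {avg:,.0f}). "
            "It could be a missing filter. Want me to check?")


# Hypothetical Monday sales for one region over the previous six weeks
mondays = [100_000, 104_000, 98_000, 101_000, 97_000, 100_000]
message = explain_outlier(mondays, 400_000, "APAC Monday sales")
```

Comparing like with like (Mondays against Mondays) is the design choice that keeps ordinary weekly seasonality from triggering false alarms.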

3. Forecasting That Doesn’t Feel Like Guesswork

Sure, TM1 can forecast, and its predictive forecasting works really well.

But agentic AI can simulate scenarios on its own and recommend the best one.

Examples:

- Auto-build 20+ what-if scenarios

- Rank them based on risk or probability

- Push the best one straight into a cube

It’s like giving your CFO a crystal ball… a slightly nerdy one.
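Here is a small Python sketch of the "build scenarios, then rank them" loop. The two drivers (growth and cost ratio), the base revenue, and the probability function are hypothetical; pushing the winning scenario into a cube is left out because it depends on your environment.

```python
from itertools import product


def build_scenarios(base_revenue, growth_rates, cost_ratios):
    """Create one what-if scenario per combination of driver values."""
    scenarios = []
    for growth, cost_ratio in product(growth_rates, cost_ratios):
        revenue = base_revenue * (1 + growth)
        scenarios.append({
            "growth": growth,
            "cost_ratio": cost_ratio,
            "profit": revenue * (1 - cost_ratio),
        })
    return scenarios


def rank_by_expected_profit(scenarios, probability):
    """Sort scenarios by profit weighted by an assumed probability."""
    return sorted(scenarios,
                  key=lambda s: s["profit"] * probability(s),
                  reverse=True)


growth_rates = [-0.10, -0.05, 0.0, 0.05, 0.10]
cost_ratios = [0.60, 0.65, 0.70, 0.75]
scenarios = build_scenarios(1_000_000, growth_rates, cost_ratios)


def probability(scenario):
    # Assumed likelihood: the further growth strays from flat, the less likely
    return 1.0 - 5.0 * abs(scenario["growth"])


best = rank_by_expected_profit(scenarios, probability)[0]
```

Five growth rates and four cost ratios give 20 scenarios. Swapping in a different `probability` function changes the ranking without touching the scenario builder.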

4. TM1 Admin Tasks… Done Automatically

This is the part TM1 developers love.

Agentic AI can:

- Fix failing processes

- Rewrite TurboIntegrator code

- Clean up unused objects

- Suggest how to reduce the cube size

Admittedly, this is easier said than done and the results are subjective, but the possibilities grow with the more quality data we can ingest and the more we can train the model.

5. Natural Language Access to TM1

We’ve already seen this with the AI chat assistant in PAW: instead of navigating a million cubes and views, we can prompt Planning Analytics with something like, “Give me gross margin by product for Q3 vs last year and show me drivers of variance.”

And it does a fine job.

No view-building. No subset drama. No filter pain.

6. Real-time Decision Automation

Finance teams love workflows, and agentic AI is perfect for building and automating them. It can:

- Approve expenses based on policy

- Kick off TM1 processes when thresholds hit

- Trigger emails, Teams alerts, Slack actions

- Update commentary automatically

So instead of you manually entering forecasts or budgets, the agent proactively initiates those steps for you.

With time, we’re only going to see more of:

- AI agents running close cycles

- AI agents building dashboards

- AI agents talking to ERP, CRM, S3, APIs without humans touching integrations

- AI agents debugging your model while you sleep

Why TM1 Specifically Is a Perfect Fit

As we know, TM1 is:

- Real-time

- Calculation-heavy

- Highly scriptable

- Connected to everything

- Used for tons of repetitive work

Which is exactly the playground where agentic AI thrives.

Plus, TM1 developers are already half-cyborg 😉 with the stuff they automate — agents just take it further.

So the biggest takeaway from all of this is that agentic AI isn’t coming “in the future”; it’s already here! Things are moving very fast in this space.

It’s already sliding into FP&A tools, APIs, planning models, and the daily grind of finance teams. If TM1 was the engine, then agentic AI is the turbocharger bolted on top.

And yes — as a disclaimer, it might even finally stop your chore from failing at 3 AM for no reason 😉

Procurement teams today are under pressure to move faster, reduce risk, and operate with greater transparency. Yet contract review — one of the most critical procurement responsibilities — remains slow, manual, and highly inconsistent across most organisations.

A major enterprise client came to Octane facing exactly this challenge. Their procurement function was overwhelmed: contracts were buried in inboxes, reviews took hours, and comparing updated versions created delays and negotiation blind spots.

Octane delivered a powerful, AI-enabled solution using IBM Watsonx Orchestrate, combining a Contract Analyser with an intelligent Ask Procurement interface. Together, these capabilities have redefined how the client manages contract intake, review, insights, and procurement intelligence at scale.

The Business Challenge

The client’s procurement team was experiencing significant bottlenecks:

- Contract overload and inbox chaos: Supplier agreements arrived via email and were often lost or delayed, slowing downstream purchasing decisions.

- Time-consuming manual analysis: Procurement staff could spend 1–3 hours per contract summarising content, identifying risks, and preparing commentary for stakeholders.

- Difficulty comparing contract versions: Updated supplier contracts required line-by-line manual comparison, often leading to missed red flags and weaker negotiation leverage.

- Limited visibility into procurement insights: Leaders had no quick way to query procurement data, trends, supplier risks, or anomalies.

These issues created avoidable risk, slowed procurement cycles, and stretched team capacity.

The Octane Solution: AI-Enabled Contract Analyser + Ask Procurement

Octane deployed a streamlined, automated solution powered by IBM Watsonx Orchestrate that addresses both contract processing and procurement intelligence.

✔ AI Contract Analyser

The analyser automatically:

- Captures new supplier contracts the moment they appear in email

- Extracts and understands contract text

- Summarises key clauses and obligations

- Identifies risks, red flags, and missing components

- Highlights differences between contract versions

- Generates a negotiation playbook

- Delivers insights to stakeholders instantly

This means procurement teams no longer read contracts line-by-line — the AI does the heavy lifting.
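The version-comparison step is the easiest one to picture in code. The sketch below is not how the Contract Analyser works internally (the source does not describe that); it is a plain text-diff stand-in using Python’s standard `difflib` module, with made-up contract lines, to show the kind of output a reviewer would start from.

```python
import difflib


def changed_clauses(old_text, new_text):
    """Return removed ("- ") and added ("+ ") lines between two versions."""
    diff = difflib.ndiff(old_text.splitlines(), new_text.splitlines())
    # Keep real changes only; drop unchanged lines and "? " hint lines
    return [line for line in diff if line.startswith(("- ", "+ "))]


# Hypothetical contract excerpts
previous = "Term: 12 months\nPayment: 30 days\nRenewal: manual"
updated = "Term: 12 months\nPayment: 60 days\nRenewal: automatic"
changes = changed_clauses(previous, updated)
```

A diff like this only says what changed. Explaining why it matters and suggesting negotiation arguments is the part that needs a language model on top.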

✔ Ask Procurement: AI interface for procurement intelligence

As part of the deliverable, Octane introduced Ask Procurement, a conversational AI interface that allows users to:

- Query procurement data

- Identify spend trends

- Detect anomalies in contracts or vendors

- Access historical contract insights

- Surface negotiation patterns

- Review supplier performance indicators

Whether it’s “Show me all suppliers with auto-renewal clauses” or “Summarise risk trends for our top five vendors,” Ask Procurement provides instant answers.

Together, these tools create a true digital procurement co-pilot.

The Impact for the Client

The benefits have been significant and immediate:

- Review time reduced to under a minute: What previously took hours now happens automatically, with contracts analysed, summarised, and compared in seconds.

- Reduced legal and commercial risk: The AI produces a structured risk register, helping teams spot issues earlier and make more informed decisions.

- Stronger negotiation positions: The system highlights what changed between versions, why it matters, and recommended negotiation arguments. This gives the procurement team a consistent, data-driven advantage.

- Faster procurement cycle times: Automated intake and instant insights have removed bottlenecks, improving supplier onboarding, purchase approvals, and contract turnaround times.

- No more lost contracts: The AI automatically captures, stores, and processes every attachment.

- Improved organisational intelligence: With Ask Procurement, leaders now have instant visibility, searchable procurement knowledge, on-demand insights, and clear trend analysis.

This shifts procurement from reactive to proactive.

Why This Matters

This project demonstrates what applied enterprise AI looks like in the real world — practical, operational, and immediately beneficial.

It shows how organisations can:

- Modernise procurement without replacing systems

- Automate high-effort tasks with intelligent workflows

- Strengthen compliance and governance

- Provide teams with insights previously locked away in documents

- Use AI as an everyday digital procurement analyst

It also reinforces that AI is not only for futuristic use cases — it is delivering meaningful value today.

What’s Next: Full Lifecycle Automation with E-Signature

The next extension is already underway:

AI-driven e-signature workflows, enabling:

- Automated signing

- Routing and approval

- Audit trails

- Archiving and version control

This will close the loop across the entire procurement lifecycle:

Intake → Review → Insights → Decision → Signature → Storage

Conclusion

Octane’s AI Contract Analyser and Ask Procurement portal offer a new way forward for procurement teams looking to accelerate productivity, reduce risk, and enhance decision-making.

By combining IBM Watsonx Orchestrate, structured AI reasoning, and deep procurement expertise, Octane has delivered a real-world, production-ready solution that transforms how contracts and procurement intelligence are managed at scale.

If you'd like to explore how this could work inside your organisation, the Octane team is ready to demonstrate what’s possible.

In today’s volatile business landscape, agility is no longer a competitive advantage—it’s a necessity. True agility means moving beyond fast response to actively anticipating market shifts and seamlessly aligning people, processes, and technology to act decisively.

Enterprises possess vast troves of data, yet the ultimate differentiator is the ability to transform that data into actionable insights and automated, intelligent decisions. At Octane Analytics, we are driving this transformation across industries by evolving disconnected reporting tools into a unified, intelligent ecosystem powered by IBM's premier analytics and AI platforms.

The Unified Framework for Intelligent Decisions

IBM’s comprehensive suite of solutions—including Planning Analytics, Cognos Analytics, SPSS, Decision Optimisation, Controller, and Watsonx Orchestrate—delivers a connected framework that manages business performance from strategic vision through to operational execution. This integration establishes a data-to-decision continuum where insights fluidly integrate into planning, execution, and automation cycles.

- IBM Planning Analytics moves organisations beyond static budgeting to dynamic, driver-based forecasting and scenario modelling.

- IBM Cognos Analytics empowers business users with AI-driven dashboards and visualisation tools for deep insight exploration.

- IBM SPSS integrates statistical precision and data science into business planning, ensuring predictions are rooted in reliable data, not intuition.

- IBM Decision Optimisation models complex business scenarios to identify the most efficient and optimal outcomes.

- IBM Controller simplifies and automates financial consolidation, closing, and regulatory reporting.

- IBM Watsonx Orchestrate enables non-developers to automate repetitive workflows, directly connecting insights to business action without writing code.

The Pivot to Predictive and Prescriptive Analytics

Many organisations remain reactive, focused on analysing "what happened." The step-change in performance occurs when analytics shift to answering the crucial questions: “what will happen?” (Predictive) and “what should we do about it?” (Prescriptive).

The integrated IBM ecosystem facilitates this critical shift:

- Prediction Informs Strategy: Predictive models built in SPSS directly inform forecasts within Planning Analytics, making financial and operational plans immediately responsive to market shifts.

- Prescription Optimises Action: Decision Optimisation identifies the best sequence of actions to achieve a business goal, operating within specified constraints.

- Automation Operationalises Insight: Watsonx Orchestrate then automates the prescribed follow-up actions (whether triggering workflows in HR, Finance, or Operations), significantly boosting responsiveness and reducing manual workload.

This synergy elevates the organisation from merely data-driven to decision-driven, where insights are not just observed but fully operationalised.

AI and Automation: Transforming Finance and Operations

Automation is no longer confined to the IT department. Today, modern CFOs, HR executives, and department leaders are leveraging agentic AI to offload repetitive, high-volume tasks and achieve new levels of efficiency.

Consider the impact across key functions:

- Financial Performance Management: Imagine a Finance Manager who automatically receives consolidated reports prepared by IBM Controller, reviewed with AI-assisted insights from Cognos Analytics, and validated against dynamic budget forecasts from Planning Analytics.

- Intelligent HR Operations: A People Leader uses Watsonx Orchestrate to streamline repetitive HR tasks—from scheduling interviews and summarising resumes to ensuring records are instantly updated across all ERP systems.

At Octane Analytics, we specialise in designing and deploying these agentic AI ecosystems, ensuring automation amplifies human capability and drives measurable outcomes.

Why Choose Octane Analytics?

As an IBM Gold Partner, Octane Analytics offers deep, specialised expertise in integrating and optimising IBM’s entire performance management stack.

Our approach is centred not just on product deployment, but on measurable business outcomes: enhanced agility in planning, increased accuracy in forecasting, greater efficiency in reporting, and empowerment through automation.

Whether your immediate need is strategic financial consolidation or a full-scale enterprise performance management overhaul, our team provides the expertise to define the roadmap, deliver the integrated solution, and ensure a demonstrable Return on Investment (ROI).

The Future: A Connected, AI-Powered Enterprise

The future of enterprise performance hinges on connected intelligence—an environment where AI and analytics continuously learn, adapt, and act across all business functions.

Organisations that master this integrated, AI-first approach will not only achieve operational efficiency but also build unparalleled resilience and foresight in a rapidly changing global market. At Octane Analytics, we are committed to helping enterprises realise this future, one intelligent decision at a time.

Let’s Build the Intelligent Enterprise Together

If you are exploring how integrated AI, advanced analytics, and automation can significantly elevate your business performance, we invite you to connect with us. Our team can provide tailored, real-world use case demonstrations—from predictive planning to automated workflow execution—all powered by IBM’s market-leading technology.

📩 Reach out to Octane Analytics today to schedule a discovery session.

In a recent project with a leading media company in Australia, we set out to demonstrate how IBM Watsonx Orchestrate can revolutionise finance operations through the power of Generative AI. The Commercial Finance team, under constant pressure to deliver timely, accurate and insight-rich reports, needed a smarter way to move beyond manual data wrangling and deliver executive-ready outputs in record time.

That’s where Watsonx Orchestrate came in. Unlike traditional BI or workflow automation tools, Watsonx Orchestrate leverages Generative AI to not only automate repetitive tasks but also to interpret, contextualise and generate meaningful outputs. The result is a system that empowers both analysts and executives to act faster, with confidence, while minimising human bottlenecks.

Automating Financial Report Generation

Key Capabilities:

- Automated extraction, transformation and loading (ETL) of data from a data warehouse.

- Automated generation of third-party and monthly executive summary reports.

- AI-driven identification of key events influencing financial outcomes.

- Analyst verification loop to ensure accuracy and compliance.

Business Impact:

- Reports created in minutes rather than weeks.

- Reduced data duplication and inconsistencies.

- Analysts free to focus on high-value strategic analysis.

- Executives receive timely, validated insights for faster decision-making.

Self-Service Financial Insights

Key Capabilities:

- A bespoke AskFinance portal enabling natural language queries.

- Secure access aligned with role-based permissions.

- Pre-trained CFO scenarios to simulate executive decision contexts.

- Integrated visualization tools for interactive reporting.

Business Impact:

- Executives gain independence in accessing financial data.

- Real-time insights without reliance on BI analysts.

- Streamlined reporting across departments and report types.

- Forecasting and scenario modeling made simple, accurate and quick.

Use Cases in Action

Producing Monthly YTD Monetisation Reports: Automating the calculations behind key metrics, seamless PowerPoint slide generation, clean, consistent reporting outputs in a standardised format.

Delivering Monetisation Insights: Automated chart creation and AI-driven callouts, generative commentary highlighting anomalies or areas needing attention, a natural language interface to query insights and commentary directly.

Tangible Benefits

- ~60% ROI: Analysts reallocated to higher-value activities, reducing attrition costs.

- ~99% efficiency gains: Manual reporting reduced to near-zero.

- 2 weeks → 10 minutes: End-to-end report creation compressed dramatically.

- Improved data quality: Automated reconciliation reduces inconsistencies and errors.

- Scalability: Built to handle larger datasets and evolving financial needs.

Beyond Media: Industry Relevance

The use case resonates strongly across industries, such as airlines, where BI Analysts and Finance teams spend significant time manually preparing and reconciling data. In one example, reliance on IBM Planning Analytics was slowing executive decision-making as stakeholders had to wait for analysts to deliver real-time data insights.

Watsonx Orchestrate bridges this gap by delivering:

- Automation of complex financial workflows.

- Generative insights at scale.

- Democratisation of access to financial intelligence.

Curious how Agentic AI could reshape your finance operations? Let’s start a conversation tailored to your requirements.

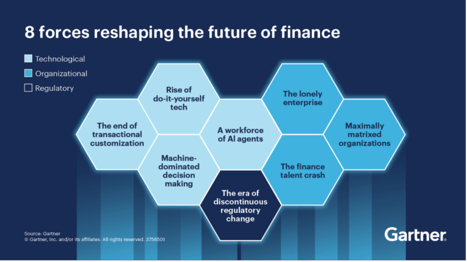

The 8 Forces Reshaping the Future of Finance – and How Agentic AI Helps CFOs Lead

Gartner has pinpointed 8 disruptive forces set to fundamentally transform the finance function. These changes, spanning technological advancements, organisational shifts, and regulatory upheavals, pose both risks and opportunities for CFOs. Success will belong to those who leverage Agentic AI, such as Watsonx Orchestrate, and Extended Planning & Analysis, like IBM Planning Analytics, not merely to adapt but to lead the transformation. Finance is standing at a critical juncture. Gartner emphasises that the role of finance is evolving from historical reporting to actively shaping the future of the business.

To lead in this new landscape, CFOs require more than automation. They need Agentic AI, like IBM Watsonx Orchestrate, to operate seamlessly across workflows and Extended Planning & Analysis (xP&A), such as IBM Planning Analytics, to serve as a unified, intelligent source for forecasting, scenario planning, and decision-making.

Together, these platforms form a new operational foundation for finance, striking a balance between cost efficiency, agility, governance, and innovation.

1. A Workforce of AI Agents

The Challenge: By 2027, one-third of enterprise software will embed Agentic AI. Finance tasks once performed manually will be supervised and executed by autonomous agents, driving exponential efficiency.

The Solution:

- Watsonx Orchestrate deploys AI agents that autonomously reconcile data, build “what-if” scenarios, or flag exceptions across ERP, CRM, and finance platforms.

- These agents don’t just predict outcomes; they act: re-routing approvals, generating reports, and escalating high-value tasks.

2. Machine-Dominated Decision Making

The Challenge: By 2028, 70% of finance functions will rely on AI-powered real-time decisioning. Human-led bottlenecks will give way to AI-enhanced scenario modelling and automated choices.

The Solution:

- Planning Analytics creates driver-based models that focus on variables that truly move the business (e.g., unit margins, demand drivers, or tariff costs).

- Watsonx Orchestrate translates these models into actions, running multiple scenarios in parallel and surfacing recommendations with governance and audit trails.

The Outcome: CFOs can make confident decisions faster — automating routine trade-offs while freeing analysts to stress-test strategy.

3. Rise of Do-It-Yourself Tech

The Challenge: Low-code and no-code platforms will see $41B in spend by 2028, enabling finance to become digitally self-sufficient.

The Solution:

- Planning Analytics provides a governed sandbox for FP&A teams to run ad-hoc models, ensuring agility without fragmenting data integrity.

- Watsonx Orchestrate acts as the connective tissue, pulling insights into workflows and presenting results conversationally.

The Outcome: True finance self-sufficiency — teams empowered to experiment and run scenarios, without losing enterprise-wide consistency.

4. The End of Transactional Customisation

The Challenge: By 2030, most finance functions will converge on identical transactional processes. Differentiation will come from insights and agility, not customisation.

The Solution:

- Watsonx Orchestrate automates repetitive, non-differentiating processes (invoice matching, close cycles, reconciliations).

- Planning Analytics ensures finance value lies in insight and foresight, not transactions, embedding real-time planning across the enterprise.

The Outcome: Finance becomes a growth engine, not a cost centre, investing resources in innovation and transformation rather than maintenance.

5. The Lonely Enterprise

The Challenge: Self-service tech adoption (20–50% penetration in 2 years) will push analysis out of finance and into the business.

The Solution:

- Planning Analytics creates a living model of assumptions, policies, and KPIs.

- Watsonx Orchestrate enables agents to auto-generate compliance reports, simulate regulatory impacts, and escalate issues proactively.

The Outcome: CFOs can stay ahead of regulators, ensuring confidence in disclosures and agility in response, without ballooning compliance costs.

6. Maximally Matrixed Organisations

The Challenge: By 2030, large enterprises will become increasingly matrixed — characterised by complex reporting lines, distributed decision-making, and cross-functional dependencies. While this model allows global scale, it comes at a cost: decision-making slows down, bottlenecks multiply, and finance often becomes the bottleneck rather than the enabler. Gartner predicts a significant reduction in corporate decision speed due to this complexity.

How CFOs Stay Agile with IBM

- Watsonx Orchestrate cuts across silos by deploying AI agents that integrate data from disparate systems (ERP, CRM, HR, supply chain). These agents autonomously synthesise inputs, flag bottlenecks, and propose actions without waiting for endless email chains or manual escalations.

- Planning Analytics provides a single source of truth across geographies and business units, enabling finance teams to run real-time, driver-based scenarios that reflect the complexities of a matrixed structure.

The Outcome: CFOs regain speed and agility. Instead of being trapped in the complexity of governance and approvals, decisions are powered by cross-system insights, actionable in minutes rather than weeks. Finance evolves into the “accelerator” in a maximally matrixed enterprise.

7. The Finance Talent Crash

The Challenge: The finance profession is heading toward a talent crunch. Demand for digital, analytical, and AI skills is skyrocketing, but the supply of finance professionals with this hybrid capability is scarce. Meanwhile, much of finance talent remains locked in repetitive tasks like reconciliations, reporting, and compliance — jobs that do little to attract or retain the next generation.

How IBM & Octane Mitigate the Crash

- Agentic AI (Watsonx Orchestrate) automates routine, manual workflows such as reconciliations, reporting prep, and document processing. By doing so, it frees scarce talent to focus on strategic work: forecasting, scenario planning, and advising the business.

- Planning Analytics amplifies finance professionals’ value by equipping them with tools to run advanced models, predictive forecasts, and multi-scenario analysis.

- Octane’s AI Adoption Workshops (delivered in partnership with IBM) provide hands-on reskilling for FP&A teams. These workshops ensure finance professionals transition from “spreadsheet operators” to strategic analysts who understand both the business and the AI tools that power it.

The Outcome: CFOs can do more with less. Talent is not just retained but re-energised, focused on high-value activities that align with business growth. The talent gap becomes an opportunity: finance professionals become champions of digital transformation rather than casualties of automation.

8. The Era of Discontinuous Regulatory Change

The Challenge: Regulatory landscapes are evolving faster than ever. From ESG disclosures to cross-border tax regimes and industry-specific compliance requirements, CFOs face a constant barrage of discontinuous, unpredictable regulatory changes. Manual compliance frameworks can no longer keep pace, exposing firms to risk and spiralling costs of control.

How Watsonx Orchestrate & Planning Analytics Support

- Watsonx Orchestrate embeds governance and compliance into every workflow. AI agents automatically generate audit trails, monitor transactions for anomalies, and escalate risks before they become issues. Instead of building compliance after the fact, governance becomes native and continuous.

- Planning Analytics enables finance to run regulatory impact scenarios in real time: for example, modeling how a new ESG disclosure requirement might affect capital allocation or how new tax rules impact profitability by geography.

- Combined, they give CFOs the ability to adapt instantly, ensuring compliance while keeping costs under control.

The Outcome: Regulatory change becomes less of a disruption and more of a strategic advantage. CFOs can demonstrate resilience to boards and regulators, protecting reputation while ensuring agility.

Adaptive Scenario Planning: Why This Matters Now

The real battleground for CFOs is scenario planning. Traditional methods are too slow for today’s volatility. Adaptive approaches — powered by AI — allow finance leaders to:

- Run rolling forecasts updated daily, not quarterly.

- Build driver-based models that respond instantly to tariffs, FX rates, or demand shocks.

- Generate multiple scenarios in real time and attach clear contingency playbooks.

- Show investors not just one “answer,” but a strategic range of preparedness.

Here’s where the synergy between Planning Analytics and Watsonx Orchestrate is critical:

- Planning Analytics ensures the data model, drivers, and assumptions are clean, integrated, and ready for real-time updates.

- Watsonx Orchestrate enables CFOs to simply ask, “How does a 5% tariff change impact margin by region?” and instantly receive scenario outputs, plus trigger next steps (e.g., adjust budgets, reschedule supplier contracts).
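To show what sits behind a question like the tariff one, here is a minimal driver-based sketch in Python. It is not Planning Analytics or Watsonx Orchestrate code. The regions, figures, and the reading of "5%" as five percentage points added to the tariff rate are all assumptions made for illustration.

```python
def margin_by_region(regions, tariff_change=0.0):
    """Recompute margin per region after shifting the tariff rate.

    Each region carries revenue, non-tariff costs, the value of imported
    goods, and the current tariff rate applied to those imports.
    """
    results = {}
    for name, r in regions.items():
        tariff_cost = r["imports"] * (r["tariff_rate"] + tariff_change)
        profit = r["revenue"] - r["other_costs"] - tariff_cost
        results[name] = round(profit / r["revenue"], 4)
    return results


# Hypothetical figures, in thousands
regions = {
    "APAC": {"revenue": 1000, "other_costs": 600,
             "imports": 400, "tariff_rate": 0.10},
    "EMEA": {"revenue": 800, "other_costs": 560,
             "imports": 160, "tariff_rate": 0.05},
}
baseline = margin_by_region(regions)
shocked = margin_by_region(regions, tariff_change=0.05)
impact = {name: round(shocked[name] - baseline[name], 4) for name in regions}
```

Because margin is derived from drivers rather than typed in, changing one driver (the tariff rate) flows through to every region in a single recalculation, which is what makes instant scenario answers possible.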

The CFO’s Leadership Imperative

The forces reshaping finance — from matrixed complexity to talent shortages to regulatory turbulence — are daunting. But they also present a unique opportunity. CFOs who embrace Agentic AI today won’t just adapt to disruption; they’ll lead it.

With IBM Watsonx Orchestrate (Agentic AI) and IBM Planning Analytics (xP&A), the Office of Finance can:

- Automate: Cut month-end close cycles by 3× while reducing manual errors.

- Anticipate: Run real-time “what-if” scenarios with confidence, powered by driver-based models.

- Adapt: Stay compliant amid discontinuous regulatory change with embedded audit trails and anomaly detection.

- Amplify: Re-deploy scarce finance talent into strategic, growth-focused roles.

The message is clear: The 8 forces will reshape finance — but with Agentic AI, CFOs can lead the disruption, not be disrupted.

The Payoff: Efficiency Meets Innovation

When finance leaders integrate these technologies, the results are dramatic:

- 99% faster reporting – weeks of manual effort compressed into minutes.

- 3× faster close cycles – freeing capacity for forward-looking analysis.

- 60% ROI in Year One – cost savings plus strategic impact.

- Cultural transformation – finance staff moving from routine tasks to high-value thinking: experimentation, scenario testing, and strategic advising.

Why Partner with Octane

Transformation isn’t just about technology; it’s about execution. That’s where Octane makes the difference. Watch the recording to hear how leaders from IBM, Rinnai Australia, and Octane are already using AI to unlock efficiency, cut manual reporting by 40+ hours a week, and even accelerate M&A integration. Here is how Octane can help:

- AI Adoption Workshops: Delivered in partnership with IBM, Octane’s workshops provide hands-on reskilling for FP&A teams. These ensure finance professionals transition from “spreadsheet operators” to strategic analysts who understand both the business and the AI tools that power it.

- Fixed-Price Upgrade Offer: Octane can modernise your xP&A platform on a fixed-price basis after just a 2-hour technical workshop with your team.

- AI in Finance Use Cases: In parallel, after a 2-hour strategic workshop with your finance leadership, Octane will deliver two AI use cases tailored to your business — so you see tangible value in weeks, not months.

CFOs are no longer just guardians of cost; they are champions of transformation.

With Watsonx Orchestrate and Planning Analytics, powered by Octane’s delivery expertise, you can accelerate value in 6–8 weeks: modernise your platform, reskill your teams, and embed AI use cases that pay back immediately.

Bring your own Use Case

Bring your own use case to life and generate business value for your organisation with the help of our team of AI experts.

Talk to us!

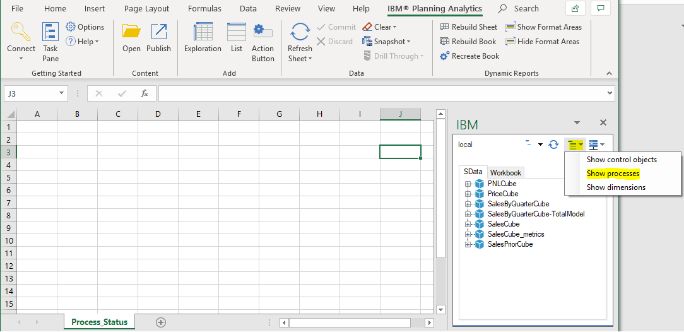

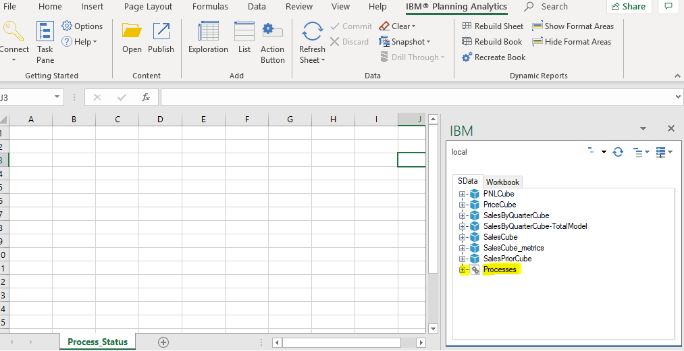

IBM Planning Analytics has introduced new features that make development and administration tasks much easier. Two of the most impactful improvements are the ability to see variable values while debugging TI processes and the enhanced Database Explorer.

1. Hover to See Variable Values in TurboIntegrator Debugger

Debugging TI processes used to mean adding log statements and rerunning processes just to see variable values. With the new hover help, simply move your mouse over a variable in the debugger, and its value is displayed (e.g., sCube = 'Asset_Input').

✅ Benefit: Makes debugging much faster, eliminates extra logging, and helps you quickly confirm whether variables are behaving as expected.

Figure 1: Hovering over a variable shows its value instantly in the TI debugger

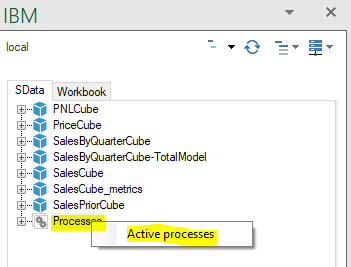

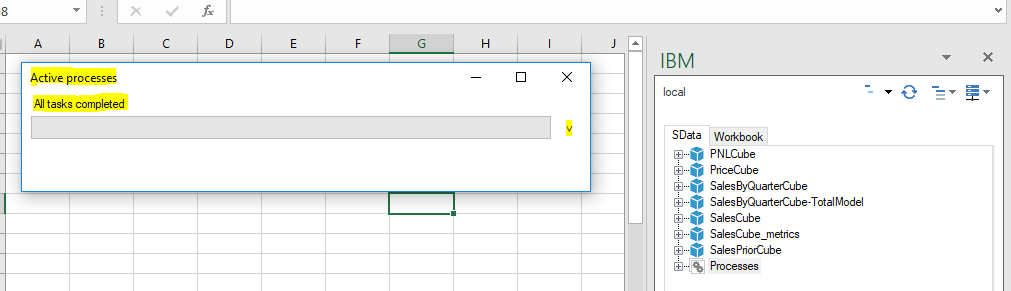

2. Database Explorer: A Smarter Way to Navigate Your Environment

Managing a Planning Analytics server or instance often involves checking how many objects exist—be it cubes, dimensions, processes, chores, or control objects. Previously, administrators and developers had to dig through folders or rely on TI scripts to gather this information. Now, with the Database Explorer, everything is accessible in one clean interface.

Key features include:

- Quick Object Counts: Instantly see how many cubes, dimensions, processes, and chores are available.

- Process Data Source Types: Displays what data source a process is using (e.g., Cube, ODBC, or 'No data source').

- Organised View: Objects are grouped into categories, reducing clutter and making navigation straightforward.

- Centralised Actions: Access logs, import/export, manage users, refresh security, or check server version from one place.

✅ Benefit: The Database Explorer improves transparency and efficiency, helping both administrators and developers work faster by providing a unified view of objects and their data sources.

Figure 2: Navigation through the Database Explorer menu

Figure 3: Object counts displayed in Database Explorer

Figure 4: Data source shown when Processes is clicked

Small Changes, Big Impact

These updates may seem minor, but they greatly improve user productivity. From instantly checking variable values in debugging to exploring databases more efficiently, IBM Planning Analytics is now smarter and more user-friendly.

In an age where AI is often synonymous with machine learning, one of the most powerful, but often overlooked, tools in the AI toolbox is optimisation. At the heart of many real-world, high-stakes decisions lies a mathematical engine built to deliver the best possible outcome. And IBM ILOG CPLEX continues to be that engine of choice.

As we move deeper into 2025, one hot trend is hybrid AI, the combination of predictive models with prescriptive optimisation. Why predict what might happen if you can also decide what should happen? That’s where CPLEX shines.

Real-world impact: From supply chains to smart grids

Whether it’s dynamically routing fleets, allocating resources under uncertainty, or scheduling energy consumption during peak hours, organisations are leveraging CPLEX not just as a solver, but as a strategic decision engine.

Here are a few standout use cases:

- Retail & E-Commerce: Predicting customer demand using ML, then using CPLEX to optimise fulfilment across a decentralised warehouse network.

- Utilities: Combining real-time sensor data with CPLEX-based scheduling to balance load in smart grid systems.

- Finance: Creating portfolio allocations that meet regulatory requirements and maximise return, all while adapting to market volatility.
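To make the "predict, then decide" idea concrete, here is a minimal Python sketch of the retail case. It does not call CPLEX: a brute-force search stands in for the solver so the example stays self-contained, and every number, region and warehouse name is hypothetical. The predicted demand is simply hard-coded where an ML forecast would normally plug in.

```python
from itertools import product

# Hypothetical forecast per region (stand-in for an ML prediction)
predicted_demand = {"north": 3, "south": 2}
# Hypothetical warehouse capacities and per-unit shipping costs
capacity = {"w1": 3, "w2": 4}
cost = {
    ("w1", "north"): 2, ("w1", "south"): 5,
    ("w2", "north"): 4, ("w2", "south"): 1,
}


def best_plan(demand, capacity, cost):
    """Enumerate every integer shipping plan and return the cheapest feasible one."""
    routes = sorted(cost)
    # A route never needs to carry more than the destination's demand
    ranges = [range(demand[region] + 1) for _, region in routes]
    best_cost, best = None, None
    for quantities in product(*ranges):
        plan = dict(zip(routes, quantities))
        # Each region must receive exactly its predicted demand
        if any(
            sum(q for (_, r), q in plan.items() if r == region) != need
            for region, need in demand.items()
        ):
            continue
        # No warehouse may ship more than its capacity
        if any(
            sum(q for (w, _), q in plan.items() if w == warehouse) > cap
            for warehouse, cap in capacity.items()
        ):
            continue
        total = sum(cost[route] * q for route, q in plan.items())
        if best_cost is None or total < best_cost:
            best_cost, best = total, plan
    return best_cost, best


total_cost, plan = best_plan(predicted_demand, capacity, cost)
```

Enumeration only works for toy sizes like this one. On a real network the same objective and constraints would be handed to a solver such as CPLEX instead of being searched exhaustively.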

Cloud-native optimisation: Scaling with IBM Cloud Pak for Data

Another major shift? Optimisation in the cloud. IBM's Cloud Pak for Data is helping companies operationalise CPLEX models in ways that were unimaginable just a few years ago. Think seamless integration with data lakes, real-time dashboards, and API-first deployment models.

Why It Matters

As business environments grow more complex, the ability to make data-driven, optimal decisions in real time becomes a true competitive advantage. CPLEX brings mathematical certainty to uncertain times, and when combined with machine learning, it offers a full-spectrum AI approach that’s both predictive and prescriptive.

Are you exploring optimisation as part of your AI strategy? Let’s connect; we're happy to exchange thoughts on where prescriptive analytics is heading next.

💬Talk to us: media@octanesolutions.com.au

What is ASCIIOutputOpen?

In IBM Planning Analytics (TM1), TurboIntegrator (TI) processes are essential for automating data operations. One of the most useful functions in TI scripting is ASCIIOutputOpen, which allows you to open a file for writing ASCII data. Whether you need to create a new file or append data to an existing one, this function provides the flexibility to control file access and modifications efficiently.

Key Features of ASCIIOutputOpen

- Append or Overwrite: Choose whether to overwrite an existing file or add new data to the end.

- Shared Read Access: Enable other processes or users to read the file while it’s being written.

- Supports Multiple File Types: Works seamlessly with .csv and .txt files, making it ideal for various data export needs.

Syntax Breakdown

The basic syntax for ASCIIOutputOpen is:

ASCIIOutputOpen(FileName, OpeningMode);

Parameters Explained

- FileName: The full path and filename (including extension) where data will be written. Example: "C:\Data\Report.csv"

- OpeningMode: A numeric code that determines how the file is accessed.

| Mode | Description | Behaviour |
| --- | --- | --- |
| 0 | Overwrite without shared read access | Creates or overwrites the file; no other process can read simultaneously. |
| 1 | Append mode without shared read access | Adds data to the end of the existing file; no sharing. |
| 2 | Overwrite, shared read access enabled | Overwrites if the file exists; allows other processes to read concurrently. |
| 3 | Append, shared read access enabled | Adds data to the end; allows other processes to read the file simultaneously. |

Related Functions

For more granular control, you can also use:

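- FILE_OPEN_APPEND() – Returns the mode code for opening a file in append mode.

- FILE_OPEN_SHARED() – Returns the mode code for opening a file with shared read access.

Passing these functions as the OpeningMode argument, alone or combined, makes the chosen mode easier to read than a bare number.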
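One way to read the four mode codes is as two independent switches: append contributes 1 and shared read contributes 2. The Python sketch below models that reading. It is an interpretation inferred from the mode table, not TM1 code, and the names `APPEND`, `SHARED_READ`, `opening_mode` and `describe_mode` are made up for illustration.

```python
# Flag values inferred from the mode table (assumption):
# append contributes 1, shared read access contributes 2.
APPEND = 1
SHARED_READ = 2


def opening_mode(append: bool, shared_read: bool) -> int:
    """Return the OpeningMode code (0-3) for the requested combination."""
    return (APPEND if append else 0) | (SHARED_READ if shared_read else 0)


def describe_mode(mode: int) -> str:
    """Translate an OpeningMode code back into words."""
    if mode not in (0, 1, 2, 3):
        raise ValueError(f"unsupported OpeningMode: {mode}")
    write = "append" if mode & APPEND else "overwrite"
    sharing = "shared read" if mode & SHARED_READ else "no shared read"
    return f"{write}, {sharing}"
```

For instance, `opening_mode(append=True, shared_read=True)` gives 3, which matches the last row of the table, and `describe_mode(2)` gives "overwrite, shared read".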

Practical Examples

Example 1: Overwriting a File with Shared Read Access

If you want to generate a new CSV report (overwriting any existing version) while allowing others to read it:

ASCIIOutputOpen('C:\Reports\SalesData.csv', 2);

Example 2: Appending Data with Shared Access

If you need to add new records to an existing file without locking it:

ASCIIOutputOpen('C:\Reports\SalesData.csv', 3);

Conclusion

ASCIIOutputOpen is a powerful function in TurboIntegrator that helps manage file exports efficiently. By understanding its different modes, you can ensure seamless data operations—whether you're generating reports, logging data, or integrating with external systems.

Pro Tip: Always verify file paths and permissions before running TI processes to avoid errors!
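If you want to script that check outside TI, a small Python helper along these lines can do it. This is only a sketch: `check_export_target` is a hypothetical name, and the result is meaningful only when the script runs under the same account as the TM1 server, since that is the account that writes the file.

```python
import os


def check_export_target(path: str) -> list[str]:
    """Return a list of problems that would stop a file being written at `path`."""
    problems = []
    folder = os.path.dirname(os.path.abspath(path))
    if not os.path.isdir(folder):
        problems.append(f"folder does not exist: {folder}")
    elif not os.access(folder, os.W_OK):
        problems.append(f"folder is not writable: {folder}")
    # An existing read-only file would block both overwrite and append
    if os.path.exists(path) and not os.access(path, os.W_OK):
        problems.append(f"existing file is not writable: {path}")
    return problems
```

An empty list means no obvious blockers were found; anything else is worth fixing before the process runs.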

Have you used ASCIIOutputOpen in your projects? Share your experiences in the comments! 🚀

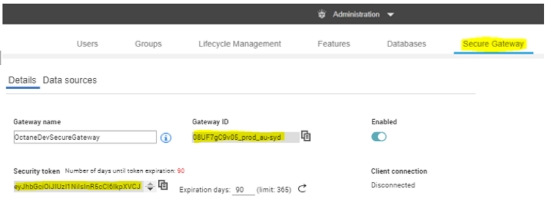

Looking for ways to monitor the health and status of your IBM Planning Analytics (PA) Application and Server?

Here are some methods for automating monitoring and receiving alerts whenever issues arise in the backend of your PA applications.

Before enabling these alerts, it's important to understand the key areas to monitor. Monitoring these aspects ensures your PA applications remain healthy, stable, and optimised for performance.

NOTE: You need the Administrator role to view these pages and perform the steps below.

1. Database Health Monitoring

To assess the health of your PA applications and databases, follow these steps:

1. Log in to IBM Planning Analytics Workspace.

2. Navigate to Administration and click Databases.

3. Under Databases, select the desired PA application.

4. On the right-hand side of the page, click on Details to view the status and health metrics.

This provides a quick overview of the database’s performance and any potential issues that may require attention.

Sample Screenshot:

You will see various status icons that indicate the current health of the PA application. Here's what each icon represents:

- Healthy: Indicates that the PA application is healthy and running without any issues.

- Warning: Indicates that the PA application is at risk of moving into a critical state. Proactive attention may be required.

- Critical: Indicates that the PA application is in a critical state and may potentially lead to a system failure or downtime if not addressed immediately.

To set up automatic alerts for your PA application:

1. On the right-hand side of the application's detail page, click on Alerts.

2. From there, you can configure the threshold values that will trigger alerts based on system performance or issues.

3. To enable the Alerts, click on the respective button, which changes to indicate it's enabled.

This allows proactive monitoring by notifying you when predefined conditions are met.

Sample Screenshot:

4. We can define the Warning threshold and Critical threshold values based on the size and memory utilised by the PA application under stable conditions.

5. We can also define Critical threshold values for the following factors:

- Max thread wait time: limits how long a thread may wait in the respective PA application, so that a slowing PA instance is flagged and the offending thread can be killed as soon as possible.

- Thread in run state: flags threads that stay in the Run state longer than expected in the respective PA application, which can slow down the server.

- Database unresponsive: flags when the database/PA application is not responding, so that we can act on it as soon as possible.

6. We can enable the Database Shutdown Alert to receive notifications on PA application Stop/Downtime and Start/Restart activity.

7. We can add multiple email IDs to receive notifications for the enabled alerts, separated by a comma (,), in the Notify email IDs text box.

8. Click Apply to save the changes made to the Alerts.
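The threshold logic behind these settings can be pictured with a short Python sketch. It is purely illustrative and not how Planning Analytics Workspace is implemented: the function names are hypothetical, and treating a reading equal to a threshold as having crossed it is an assumption.

```python
def classify(value: float, warning: float, critical: float) -> str:
    """Map a metric reading to a status using warning/critical thresholds."""
    if warning > critical:
        raise ValueError("warning threshold must not exceed critical threshold")
    if value >= critical:
        return "critical"
    if value >= warning:
        return "warning"
    return "healthy"


def parse_recipients(text: str) -> list[str]:
    """Split a comma-separated 'Notify email IDs' string into clean addresses."""
    return [part.strip() for part in text.split(",") if part.strip()]
```

With a warning threshold of 60 and a critical threshold of 80, a reading of 40 is classed as healthy, 60 as warning and 95 as critical. Picking the thresholds from usage observed under stable conditions, as described above, keeps normal load out of the warning band.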

2. Agent/PA Server Health Monitoring

To assess the health of your PA Server/Agent, follow these steps:

1. Log in to IBM Planning Analytics Workspace.

2. Navigate to Administration and click Databases.

3. Under Agents, select the desired Agent.

4. On the right-hand side of the page, click on Details to view the status and health metrics.

This provides a quick overview of the agent’s performance and any potential issues that may require attention.

Sample screenshot:

To set up automatic alerts for your PA Server:

1. On the right-hand side of the application's detail page, click on Alerts.

2. From there, you can configure the threshold values that will trigger alerts based on system performance or issues.

3. To enable the Alerts, click on the respective button, which changes to indicate it's enabled.

Sample Screenshot:

4. We can define the Warning threshold and Critical threshold values based on the size and memory utilised by the PA application under stable conditions.

5. We can add multiple email IDs to receive notifications for the enabled alerts, separated by a comma (,), in the Notify email IDs text box.

6. Click Apply to save the changes made to the Alerts.

The need for agile financial planning

In today’s rapidly evolving business landscape, organisations face unprecedented volatility, supply chain disruptions, fluctuating demand, inflationary pressures, and geopolitical uncertainties. Traditional financial planning methods, reliant on static spreadsheets and manual processes, are no longer sufficient. Businesses need real-time insights, predictive foresight, and the ability to pivot quickly in response to changing conditions.

Enter IBM Planning Analytics with Watson’s AI Assistant, a cutting-edge solution that combines multidimensional modelling with conversational AI. This solution is transforming how enterprises approach budgeting, forecasting, and performance management. This isn’t just an incremental improvement; it’s a paradigm shift in financial planning and analysis (FP&A).


What is IBM Planning Analytics with AI Assistant?

IBM Planning Analytics, built on the powerful TM1 engine, has long been recognised for its:

- In-memory computing for lightning-fast calculations

- Multidimensional modelling for complex scenario analysis

-