Many businesses have already turned to Octane and partnered with us to help turn their data into meaningful insights. So if you've wanted to connect your Planning Analytics to Power BI, you're not alone, and now with us you can. Octane has developed a way for you to work directly with IBM Planning Analytics (powered by TM1) and Microsoft Power BI. We've had a number of clients who have wanted to ...

- Planning Analytics and PowerBI

- What's new in Cognos Analytics 12.1.x

- Transform Enterprise Performance with IBM Analytics and AI Solutions

- The CFO's AI Playbook : From Ah-Ha to Acceleration

- The new age of analytics: How IBM SPSS is powering responsible AI in 2025

- Unveiling Dynamic Lists in IBM Planning Analytics for Excel

Dashboards:

Distinction between Display and Use value in dashboards:

You can now define Display and Use values in data modules.

The Display values are the values that you can see in a dashboard UI; the Use values are primarily for filtering logic.

Previously, you could define Display and Use values only in Framework Manager (FM) packages. This feature brings the same capability to data modules and improves consistency across dashboards and reporting. You can interact with readable values while filters apply precise underlying identifiers. For example, you can select a Customer Name value in the dashboard UI and apply a filter that is based on the Customer ID value.
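As a rough illustration of the Display/Use split (this is not Cognos code), the sketch below models a filter control in Python. The `FilterOption` class, the sample rows, and the column names are all hypothetical; the only point is that the user picks a readable display value while the filter compares against the underlying use value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FilterOption:
    display: str  # value shown in the dashboard UI
    use: int      # value the filter actually compares against


# Hypothetical sample rows; the column names are assumptions for illustration.
customers = [
    {"customer_id": 101, "customer_name": "Acme Ltd", "revenue": 1200},
    {"customer_id": 102, "customer_name": "Birch & Co", "revenue": 800},
    {"customer_id": 103, "customer_name": "Acme Ltd", "revenue": 300},
]


def build_options(rows):
    """Create one option per distinct use value, labelled with its display value."""
    seen = {}
    for row in rows:
        seen.setdefault(row["customer_id"], row["customer_name"])
    return [FilterOption(display=name, use=cid) for cid, name in seen.items()]


def apply_filter(rows, option):
    """Filter on the use value, never on the display label."""
    return [row for row in rows if row["customer_id"] == option.use]


options = build_options(customers)
selected = options[0]                     # the user clicks the first "Acme Ltd" entry
filtered = apply_filter(customers, selected)
```

Two customers deliberately share the name "Acme Ltd" here: filtering on the label would return both, while filtering on the use value returns only the one that was selected.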

Manage filter size and filter area visibility:

You can now resize filter columns and hide filter areas to improve the arrangement and visibility of these elements in dashboards.

For more information on resizing filter columns in the All tabs and This tab filter areas, see Resizing filters.

For more information on hiding and reshowing the filter areas, see Hiding and showing filter areas.

Option for users to export visualisation data to a CSV file:

You can now allow your users to export visualisation data to a .csv file.

To enable this feature, open a dashboard or a report that contains a visualisation, go to Properties > Advanced, and turn on the Allow users access to data option.

When this option is active, users can open the data tray and download the .csv file from the Visualisation data tab. Enabling this feature also adds an Export to CSV button and Export to CSV icon to the toolbar. The button is visible to the users and to the editors. If you turn off this feature, the button disappears.

Responsive dashboard layout:

The 12.1.1 release introduces a responsive layout feature for dashboards.

This feature enhances the authoring experience and usability across different devices by optimising the dashboard layout for various screen sizes, including mobile devices. You can also use it for grouping the content and organising visualisations.

To use a responsive layout, go to the Responsive tab when you create a new dashboard and select one of the available templates.

The responsive dashboard layout feature comes with the following key capabilities:

- Layout selection:

You can now choose between responsive and non-responsive layouts when you create a new dashboard.

- Adaptive widgets:

If you change the position of a panel or resize the dashboard window, the widget automatically adapts its placement and alignment.

- Intuitive resizing and swapping:

Smart alignment algorithms facilitate smooth layout transitions, while an intuitive interface makes the authoring experience smoother and more efficient.

- Drop zones for precise widget placement:

Each layout cell supports five drop zones: top, right, bottom, left, and center. You can use these zones for more control over widget placement.

- Cell deletion:

Dashboards now differentiate between empty and populated cells for accurate deletion.

- Data population:

The feature mirrors data population from the non-responsive layouts and supports drag-and-drop and slot item selection. If you use the copy and paste or click-add-to functions, the feature uses smart placement logic to make sure that it adds the content to empty cells. It can also split the data between existing cells.

- Window resizing:

You can now dynamically resize a dashboard, and its layout automatically adapts to the new screen size. This includes a transition to a single-column or two-column layout on smaller screens for better readability.

- Printing to PDF files:

You can print the dashboard to a .pdf file in View mode and in the New Page mode.

- Nested dashboard widgets:

You can use the nested dashboard widgets as standard widgets or as containers for grouping and organising the content.

To implement the responsive layout successfully, make sure that the dashboard uses manifest version 12.1.1 or later, and confirm widget boundaries by using the layout grid. If the widgets do not render correctly, check the layout specification and verify that the feature is supported.

Secure dashboard consumption with execute and traverse permissions:

Users can now consume dashboards with only execute and traverse permissions on the presented data; no read permission is required.

In previous releases of IBM® Cognos® Analytics, the read permission was required for dashboard consumption. This could compromise sensitive data because dashboard consumers could edit and copy it.

Important: To strengthen the protection of data that you want to be consumed by other users, modify these users' permissions from Read to Execute and Traverse before you migrate to Cognos Analytics 12.1.1.

However, the execute and traverse permissions put some restrictions on actions that can be taken by a dashboard consumer. Therefore, the consumer cannot perform the following actions:

- Drill up and down

- Export

- Narrative insights

- Navigate

- Open dashboards

- Paste copied widgets into another dashboard

- Pin

- Save

- Save as a story

- See the full data set in the data tray

- Share

- Switch to Edit mode
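The restriction list above can be pictured as a simple permission check. The following Python sketch is illustrative only and is not a Cognos API: the action labels, the `can_perform` function, and the rule that read access lifts the restrictions are all assumptions made for the example.

```python
# Actions that the text lists as unavailable to a consumer who has only
# execute and traverse permissions. The identifiers are illustrative labels.
RESTRICTED_ACTIONS = frozenset({
    "drill", "export", "narrative_insights", "navigate", "open_dashboards",
    "paste_widgets", "pin", "save", "save_as_story", "full_data_tray",
    "share", "edit_mode",
})


def can_perform(action, permissions):
    """Return True if a consumer with these permissions may perform the action."""
    granted = set(permissions)
    if "read" in granted:
        # Assumption: read access keeps the earlier, unrestricted behaviour.
        return True
    if {"execute", "traverse"} <= granted:
        return action not in RESTRICTED_ACTIONS
    return False  # not enough permissions to consume the dashboard at all


consumer = {"execute", "traverse"}
print(can_perform("view", consumer))    # viewing is not on the restricted list
print(can_perform("export", consumer))  # export is restricted
```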

Personalised dashboard views:

The 12.1.1 release comes with a new feature for simplified customisation of complex dashboard designs.

A dashboard view references a base dashboard and stores your individual filters and settings. It supports the following customisation features:

- Filters

- Brushing, excluding local filters on individual visualisations

- Bookmarks, including the ability to set the currently selected tab

You can create dashboard views only from an open dashboard within the dashboard studio, and only against saved dashboards. If the open dashboard is saved, a Save as dashboard view option appears in the save menu.

This operation works as a standard Save as operation. When the operation is complete, the original dashboard is still displayed. To access the new dashboard view, you must open it manually from the content navigation panel.

Dashboard views have a different icon from regular dashboards. It includes an eye overlay, similar to the report view icon.

You can customise a dashboard view by changing the brushing, filter, or bookmarks, and then saving the view. However, the dashboard view is essentially in a Consume mode, and you can't switch to the authoring mode. It also means that you can't access the metadata tree of the dashboard view or add extra filter controls to the filter dock. If you want your users to apply filters in a metadata column, you must first add that column to the base dashboard, even if you don't initially select any filter values.

Any updates that you make to a base dashboard automatically appear in the dashboard view, except for the custom options that you define in the dashboard view itself. You can see the changes the next time that you open the dashboard view. For example, if you delete a visualisation from the main dashboard, it no longer appears in the dashboard view.
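One way to picture this inheritance is as an overlay: the view stores only its own customisations and everything else is read from the base dashboard each time the view opens. The Python sketch below is a conceptual model under that assumption, not how Cognos stores dashboard views; `resolve_view` and the dictionary keys are hypothetical.

```python
import copy


def resolve_view(base_dashboard, view_customisations):
    """Combine the current base dashboard with the view's own settings."""
    resolved = copy.deepcopy(base_dashboard)
    # Settings saved in the view take precedence over the base dashboard.
    resolved.update(copy.deepcopy(view_customisations))
    return resolved


base = {
    "visualisations": ["Sales by region", "Margin trend"],
    "filters": {"Year": 2024},
}
view = {"filters": {"Year": 2025}}  # the only thing the view itself stores

before = resolve_view(base, view)

# The author deletes a visualisation from the base dashboard.
base["visualisations"].remove("Margin trend")

# The next time the view is opened, the deletion is reflected,
# while the view's own filter is preserved.
after = resolve_view(base, view)
```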

The Save as dashboard view operation also creates a non-editable bookmark in the dashboard view. This bookmark includes the state of filters and brushing that you applied in the dashboard at the time when the dashboard view was created or last saved. When you open the dashboard view and don't select any other bookmark, this bookmark is automatically selected.

Dashboard views not only consume bookmarks from the base dashboard, but can also have their own bookmarks. You create them in the same way as in standard dashboards. The Cognos® Analytics UI differentiates between Shared bookmarks, which are all the bookmarks from the base dashboard, and My bookmarks, which are the bookmarks from the dashboard view.

If you delete the base dashboard, you can't open the dashboard view, and its entry is disabled in the content navigation. All attempts to access that dashboard view by entering its URL directly into a browser result in an error message. Also, the Source dashboard property appears as Unavailable.

Reporting:

Enhanced clarity of reporting templates view:

Release 12.1.1 enhances the user experience of navigating through report templates.

When you open the Create a report page, it shows only templates that match the Report filter value. This change hides all Active Reports templates by default and makes only the Report templates visible.

You can use the Filter icon to customise your view. To maintain a personalised experience, Cognos® Analytics saves your selection in local storage or by using the cached value.

This enhancement also comes with upgraded filter labels, which reflect the current filter value, for example: Showing All Templates, Showing Report Templates, or Showing Active Report Templates.

Manage queries in the report cache:

You can manage which data queries are included in the report cache to control report performance.

For more information on the report cache, see Caching Prompt Data.

For example, queries to data sources that not all users can access (user-dependent queries) might degrade report performance.

You can exclude queries that degrade report performance from the cached prompt data by setting the Report cache property to No in the query properties pane:

1. In the navigation menu, click Report, then Queries in the drop-down menu.

2. In the Queries pane, select a query.

3. In the Properties pane, in the QUERY HINTS section, click the Report cache property.

4. Select one of the following values:

   - Default - the query is included in the report cache.

   - Yes - equivalent to the Default value.

   - No - the query is excluded from the report cache.

For multi-level queries, this value is transferred from the lowest-level to the highest-level query.

PostgreSQL audit deployment and model:

The 12.1.1 release comes with a new capability for enhanced auditing and reporting in environments that use PostgreSQL as the auditing database.

You can use a dedicated Framework Manager model and a deployment package to run reports against a PostgreSQL audit database. These resources provide a structure for analysing the audit data and creating insightful reports.

You can access the new samples in the following locations within your installation directory:

<installation>/samples/Audit_samples/Audit_Postgres

<installation>/samples/Audit_samples/IBM_Cognos_Audit_Postgres.zip

To use the PostgreSQL audit samples, make sure to create a data source connection named Audit_PG.

Master detail relationships with 11.1 visualisations:

You can use 11.1 visualisations in master detail relationships to present details for each master query item in a consolidated, insightful way.

For more information on master detail relationships, see Master detail relationships.

When 11.1 visualisations are the detail objects, you can now choose whether the same automatic value range is used in all visualisation instances in a master detail relationship. You control this with the Same range for all instances of the chart option. To turn this option off or on, perform the following steps:

1. Select a visualisation for which a master detail relationship is created.

2. In the Data Set pane of this visualisation, click the data item that defines values on the value axis.

3. In the Properties pane, under GENERAL, click the More icon (three dots) to the right of the Value range property.

4. In the Value range window:

   - Select Computed.

   - Turn the Same range for all instances of the chart option on to use the global extrema (the widest value range across all instances), or off to use the local extrema (the value range of each individual visualisation).
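To make the difference between global and local extrema concrete, here is a small Python sketch. It is not how Cognos computes axis ranges (real charts may, for instance, pad the range or start it at zero); the `value_ranges` function and the sample figures are invented for illustration.

```python
def value_ranges(instances, same_range_for_all):
    """Return the (min, max) value range to use for each detail visualisation."""
    # Local extrema: each instance gets the range of its own values.
    local = {name: (min(values), max(values)) for name, values in instances.items()}
    if not same_range_for_all:
        return local
    # Global extrema: every instance shares the widest range across all instances.
    lowest = min(low for low, _ in local.values())
    highest = max(high for _, high in local.values())
    return {name: (lowest, highest) for name in instances}


# Hypothetical detail values, one list per master query item.
instances = {
    "Americas": [120, 340, 280],
    "EMEA": [40, 95, 60],
    "APAC": [10, 510, 220],
}

shared = value_ranges(instances, same_range_for_all=True)
separate = value_ranges(instances, same_range_for_all=False)
```

With a shared range the instances are directly comparable at a glance, while separate ranges make small variations within one instance (EMEA in this sample) easier to see.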

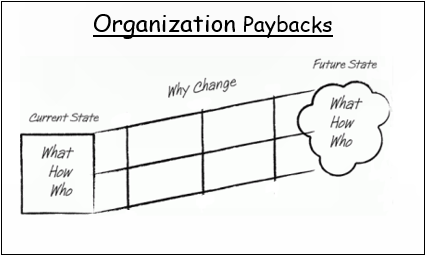

In today’s volatile business landscape, agility is no longer a competitive advantage—it’s a necessity. True agility means moving beyond fast response to actively anticipating market shifts and seamlessly aligning people, processes, and technology to act decisively.

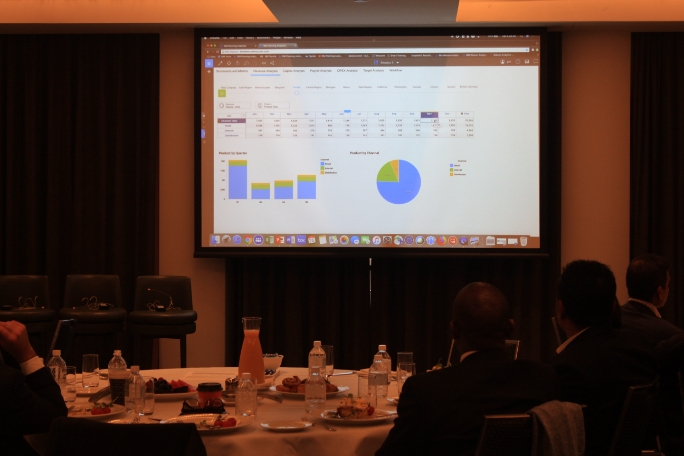

Enterprises possess vast troves of data, yet the ultimate differentiator is the ability to transform that data into actionable insights and automated, intelligent decisions. At Octane Analytics, we are driving this transformation across industries by evolving disconnected reporting tools into a unified, intelligent ecosystem powered by IBM's premier analytics and AI platforms.

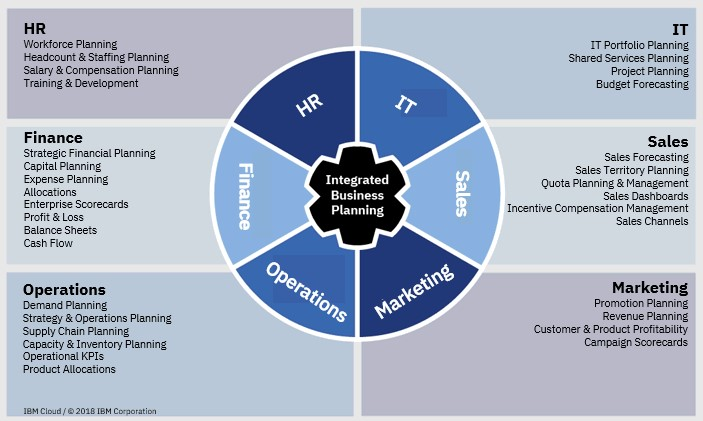

The Unified Framework for Intelligent Decisions

IBM’s comprehensive suite of solutions—including Planning Analytics, Cognos Analytics, SPSS, Decision Optimisation, Controller, and Watsonx Orchestrate—delivers a connected framework that manages business performance from strategic vision through to operational execution. This integration establishes a data-to-decision continuum where insights fluidly integrate into planning, execution, and automation cycles.

- IBM Planning Analytics moves organisations beyond static budgeting to dynamic, driver-based forecasting and scenario modelling.

- IBM Cognos Analytics empowers business users with AI-driven dashboards and visualisation tools for deep insight exploration.

- IBM SPSS integrates statistical precision and data science into business planning, ensuring predictions are rooted in reliable data, not intuition.

- IBM Decision Optimisation models complex business scenarios to identify the most efficient and optimal outcomes.

- IBM Controller simplifies and automates financial consolidation, closing, and regulatory reporting.

- IBM Watsonx Orchestrate enables non-developers to automate repetitive workflows, directly connecting insights to business action without writing code.

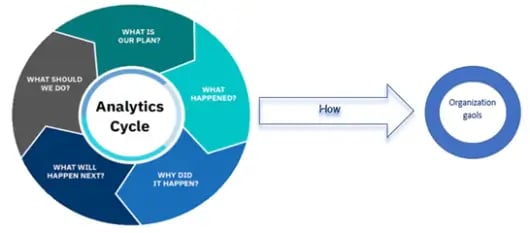

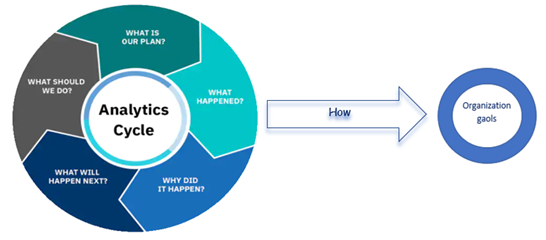

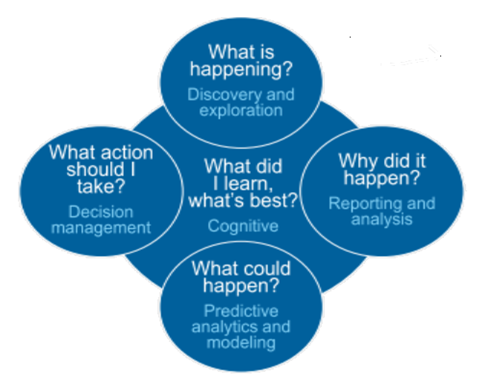

The Pivot to Predictive and Prescriptive Analytics

Many organisations remain reactive, focused on analysing "what happened." The step-change in performance occurs when analytics shift to answering the crucial questions: “what will happen?” (Predictive) and “what should we do about it?” (Prescriptive).

The integrated IBM ecosystem facilitates this critical shift:

- Prediction Informs Strategy: Predictive models built in SPSS directly inform forecasts within Planning Analytics, making financial and operational plans immediately responsive to market shifts.

- Prescription Optimises Action: Decision Optimisation identifies the best sequence of actions to achieve a business goal, operating within specified constraints.

- Automation Operationalises Insight: Watsonx Orchestrate then automates the prescribed follow-up actions, whether triggering workflows in HR, Finance, or Operations, significantly boosting responsiveness and reducing manual workload.

This synergy elevates the organisation from merely data-driven to decision-driven, where insights are not just observed but fully operationalised.

AI and Automation: Transforming Finance and Operations

Automation is no longer confined to the IT department. Today, modern CFOs, HR executives, and department leaders are leveraging agentic AI to offload repetitive, high-volume tasks and achieve new levels of efficiency.

Consider the impact across key functions:

- Financial Performance Management: Imagine a Finance Manager who automatically receives consolidated reports prepared by IBM Controller, reviewed with AI-assisted insights from Cognos Analytics, and validated against dynamic budget forecasts from Planning Analytics.

- Intelligent HR Operations: A People Leader uses Watsonx Orchestrate to streamline repetitive HR tasks—from scheduling interviews and summarising resumes to ensuring records are instantly updated across all ERP systems.

At Octane Analytics, we specialise in designing and deploying these agentic AI ecosystems, ensuring automation amplifies human capability and drives measurable outcomes.

Why Choose Octane Analytics?

As an IBM Gold Partner, Octane Analytics offers deep, specialised expertise in integrating and optimising IBM’s entire performance management stack.

Our approach is centred not just on product deployment, but on measurable business outcomes: enhanced agility in planning, increased accuracy in forecasting, greater efficiency in reporting, and empowerment through automation.

Whether your immediate need is strategic financial consolidation or a full-scale enterprise performance management overhaul, our team provides the expertise to define the roadmap, deliver the integrated solution, and ensure a demonstrable Return on Investment (ROI).

The Future: A Connected, AI-Powered Enterprise

The future of enterprise performance hinges on connected intelligence—an environment where AI and analytics continuously learn, adapt, and act across all business functions.

Organisations that master this integrated, AI-first approach will not only achieve operational efficiency but also build unparalleled resilience and foresight in a rapidly changing global market. At Octane Analytics, we are committed to helping enterprises realise this future, one intelligent decision at a time.

Let’s Build the Intelligent Enterprise Together

If you are exploring how integrated AI, advanced analytics, and automation can significantly elevate your business performance, we invite you to connect with us. Our team can provide tailored, real-world use case demonstrations—from predictive planning to automated workflow execution—all powered by IBM’s market-leading technology.

📩 Reach out to Octane Analytics today to schedule a discovery session.

We are standing at the threshold of the most significant transformation in finance since the invention of the spreadsheet. Artificial Intelligence isn’t a future concept — it’s here — quietly rewriting how finance teams plan, decide, and perform.

And yet, despite the global AI boom, 95% of enterprise AI initiatives fail to deliver measurable impact. Not because the technology falls short, but because most organisations stop too soon. They automate tasks but never redesign the system.

At Octane Solutions, we’ve worked with over 100 finance teams across APAC — and we’ve seen what separates the few that scale from the many that stall. The secret is simple but profound: structure before scale.

From Industrial Revolution to Intelligent Finance

When electricity first arrived in the 19th century, factories did what seemed logical — they replaced gas lamps with electric bulbs. Workplaces became brighter and safer, but not smarter. The true revolution began when they redesigned entire production lines around electric motors, unleashing a new era of efficiency and innovation.

Finance today stands at a similar crossroads.

Chatbots, copilots, and summarisation apps are our lightbulbs — illuminating the potential of AI but not transforming how work gets done. The real breakthrough will come with Agentic AI — a new generation of intelligent systems that reason, coordinate, and act autonomously across the finance ecosystem.

Agentic AI doesn’t just automate; it orchestrates. It doesn’t replace people; it amplifies them. And for the CFO, that means the finance function can finally shift from explaining the past to predicting — and shaping — the future.

The CFO’s Challenge: Insight at the Speed of Business

CFOs today face a dual reality:

- The demand for immediacy: real-time forecasting, continuous scenario analysis, and rolling insights.

- The constraint of legacy: manual reconciliations, fragmented data, and static planning cycles.

Most finance teams have automated fragments of their process — but not the process itself. Reporting is faster, but not necessarily smarter. True transformation happens only when AI becomes part of the fabric of finance — not an add-on.

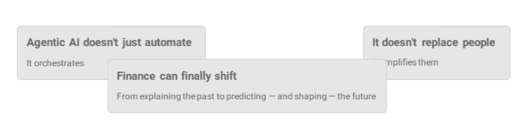

Below are 6 opportunities now emerging as AI evolves from simple LLMs to self-governing, multi-agent ecosystems:

- Conversational analytics and natural-language access to financial data. Finance teams can now ask a question — “What’s our EBITDA variance this quarter?” — and receive a fully contextualised, narrative answer drawn from live systems.

By eliminating manual report preparation, CFOs gain faster clarity and sharper storytelling for stakeholders.

Impact: 80% reduction in analyst report-prep time and faster decision support across FP&A.

- AI that reads, reconciles, and extracts insight from financial documents, PDFs, and spreadsheets.

An airline company uses AI agents linked to Planning Analytics and Excel sources to generate reconciled financial reports in 10 minutes instead of two weeks.

Impact: Continuous visibility into financial performance, replacing static monthly reporting with real-time oversight.

- AI with direct access to corporate databases, enabling dynamic analysis. For a media company, Octane’s “AskFinance” agent combined data from TM1, Adobe Analytics, and Google Ad Manager to generate contextual financial commentary in seconds.

Impact: 80% reduction in report-generation cost and 98% faster access to narrative insights — AI that doesn’t just calculate but explains why.

- AI that integrates across finance platforms — TM1, SAP, Concur, ERP, and APIs — to coordinate entire workflows. Through Watsonx Orchestrate, CFOs can now automate the entire chain from forecasting to variance reporting: “Generate a cashflow forecast and alert me if OPEX exceeds budget by 5%.” AI handles retrieval, validation, and communication autonomously.

Impact: 99% reduction in manual reporting cycles; faster consolidation, real-time alerts, and seamless cross-system collaboration.

- Autonomous decision-making within governed boundaries. AI agents now detect anomalies, recommend journal adjustments, and monitor exceptions before they escalate. This allows finance functions to move from reactive close cycles to proactive exception management.

Impact: Predictive close cycles, risk reduction, and up to 60% ROI in the first 12 months of deployment.

- Safe, transparent, multi-agent ecosystems that manage entire finance functions. Each agent — whether a “forecast bot,” “audit bot,” or “reporting bot” — operates under strict governance, with auditability, explainability, and regulatory alignment. Octane’s enterprise rollout playbook embeds SOC2, GDPR, and financial reporting controls into every workflow.

Impact: Full audit traceability, regulator-ready documentation, and scalable, trusted AI adoption.
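The alert rule quoted in the list above ("alert me if OPEX exceeds budget by 5%") comes down to a simple variance check. The Python sketch below shows only that arithmetic; it is not Watsonx Orchestrate code, and the `opex_alert` function, its parameters, and the sample figures are hypothetical.

```python
def opex_alert(actual, budget, threshold=0.05):
    """Return an alert message if actual OPEX exceeds budget by more than the threshold."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    overrun = (actual - budget) / budget  # relative variance against budget
    if overrun > threshold:
        return f"ALERT: OPEX is {overrun:.1%} over budget"
    return None  # within tolerance, nothing to report


# Hypothetical figures: 1.08m actual against a 1.00m budget is an 8% overrun.
print(opex_alert(1_080_000, 1_000_000))
# A 4% overrun stays under the 5% threshold, so no alert is raised.
print(opex_alert(1_040_000, 1_000_000))
```

In an agentic workflow, a check like this would sit between the retrieval step (pulling actuals and budget from the planning system) and the communication step (sending the alert); those steps are left out here because they depend on the systems involved.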

CFOs now need to realise that their goal is no longer to add another digital assistant, but to build an ecosystem of intelligent, responsible, and explainable agents that make finance self-improving. This shift isn’t about hype or replacing people. It’s about constructing a resilient, data-driven finance engine that learns, adapts, and optimises continuously, from planning to forecasting to audit.

Agentic AI marks the true turning point for finance: moving beyond automation to orchestration, where decisions are made faster, risks are mitigated earlier, and value is created intelligently.

The 9 Principles Behind Successful AI Transformation

Through 100+ modernisation projects, Octane has distilled nine practices that consistently deliver value:

1. Align AI to Business Impact – Focus on measurable outcomes, not pilots.

2. Build a Finance AI Centre of Excellence – Unite Finance, IT, and Operations under a single vision.

3. Invest in Skills, Not Just Software – Equip people to interpret, question, and guide AI.

4. Adopt Adaptive Governance – Control risk without stifling innovation.

5. Prioritise Data Quality – No AI can outperform bad data.

6. Start with Use Cases – Identify problems before choosing platforms.

7. Automate the Mundane – Free people for creative and strategic work.

8. Measure by Business Outcomes – Look beyond cost savings to agility, accuracy, and trust.

9. Scale Proven Success – Replicate what works across divisions.

Transformation begins with clarity, not complexity.

The Foundation of Trust and Scale: IBM

AI’s potential means nothing without trust. That’s why Octane’s partnership with IBM is central to every finance transformation journey.

Built on IBM’s Agentic AI Platform, this foundation ensures that CFOs can modernise with confidence, embedding explainability, governance, and measurable ROI from day one. IBM’s Watsonx Orchestrate (Agentic AI Platform) is a key enabler. It uses intelligent digital workers to automate complex workflows, from reconciliations to board-pack creation. With embedded governance, it’s designed to keep humans in control while machines handle the heavy lifting.

Agentic AI: From Automation to Orchestration

IBM’s Agentic AI Frameworks mark a shift from tools to systems — from automating tasks to orchestrating end-to-end business outcomes.

They include:

- Agent Development Lifecycle (ADLC): The governance backbone for building responsible agents.

- Model Context Protocol (MCP): An open standard for connecting agents to external tools and data sources in a transparent, explainable way.

- Hybrid-First Architecture: Ensures flexibility across cloud, on-prem, and edge.

This architecture doesn’t just make AI smarter — it makes it sustainable.

Anthropic + IBM: Responsible AI for Regulated Finance

IBM’s partnership with Anthropic brings the Claude family of models into the Watsonx ecosystem — marrying safety and sophistication.

This enables CFOs to deploy AI assistants that:

- Generate narrative financial reports in natural language.

- Automate forecasting and scenario modelling.

- Support reconciliations and anomaly detection within governed environments.

It’s AI that works like a trusted analyst — intelligent, auditable, and always under your control.

Groq + IBM: Redefining Speed and Efficiency

AI adoption often stalls on cost and latency. Groq changes that.

By integrating Groq’s high-speed LPU architecture into Watsonx Orchestrate, IBM delivers 5× faster inference and 80% lower compute cost — without compromising security.

This is the infrastructure that turns AI pilots into production systems.

Octane + IBM: The Partnership That Delivers

Octane’s partnership with IBM isn’t symbolic — it’s operational. Our IBM Champions work hand-in-hand with IBM’s product and engineering teams to co-create real-world use cases for finance.

From FP&A to supply chain modelling, Octane helps clients deploy AI-ready cubes, design agentic workflows, and establish continuous improvement frameworks that evolve with business needs.

Our global support models, Octane Black and Octane Blue, offer CFOs flexible, SLA-backed coverage that reduces total cost of ownership by up to 35%.

AI success isn’t about pilots — it’s about discipline, design, and delivery.

In Summary: The Cognitive Finance Function

The finance team of the future will not just report performance; it will anticipate, optimise, and advise.

It will be:

- Predictive, not reactive.

- Autonomous, not manual.

- Augmented, not overloaded.

- Connected, not siloed.

- Trusted, not opaque.

This is The Cognitive Finance Function — powered by AI, governed by design, and aligned with enterprise strategy.

What Next?

AI in finance isn’t about technology — it’s about transformation. The winners will be those who move beyond experiments to execution, designing their finance operations for intelligence, not just automation. As we head towards 2026, it's vital that your finance strategic plans are set and able to be easily communicated for impact.

Octane and IBM are helping CFOs make that leap — securely, measurably, and fast.

Book a strategy session with Octane to explore your AI-in-Finance roadmap, and we’ll walk you through the following practical roadmap to building an intelligent finance function:

1. Diagnose – Identify Where AI Can Create Meaningful Impact

2. Design – Combine Orchestration with Human Judgment

3. Deploy – Start Small, Prove Fast, Scale Wisely

4. Demonstrate – Quantify ROI and Institutionalise Learnings

5. Differentiate – Make Finance the Intelligent Core of the Enterprise

Final Thought: From Lightbulb to Lighthouse

The future of finance belongs to leaders who don’t just turn on AI; they design for it. CFOs who embed intelligence, governance, and agility into their finance DNA will redefine how value is created and measured.

In a world where AI is becoming smarter and faster, one constant challenge remains: trust.

We all want better forecasts, more accurate models, and faster insights. But as we scale machine learning and predictive analytics across the enterprise, a critical question emerges: can we explain the results?

That’s where IBM SPSS is quietly making a big impact in 2025.

Why SPSS is still a game-changer

You might think of SPSS as a tool for academic stats or survey analysis. However, its transformation in recent years positions it as a core enabler of responsible AI in businesses and government.

With capabilities like:

- Automated machine learning (AutoML) in SPSS Modeler,

- Bias detection and explainability tools,

- Seamless integration with Python and open-source libraries,

SPSS offers something that most modern AI platforms don’t: transparency without complexity.

Responsible AI isn't just a Buzzword

In industries like finance, healthcare, and the public sector, it’s not enough for a model to be accurate; it needs to be explainable, auditable, and compliant.

SPSS’s visual interface and guided modelling help non-programmers build powerful models with confidence, while its ability to export models as Python code or PMML supports enterprise deployment at scale.

It bridges the gap between business users, data scientists, and compliance teams, a rare feat in today’s fractured AI tool ecosystem.

What’s hot in SPSS right now?

- AutoAI + SPSS Modeler

IBM’s AutoAI capabilities are now extending into SPSS workflows. Users can automatically select the best algorithms, tune hyperparameters, and test pipelines, without writing a single line of code.

- Ethical Forecasting in the Public Sector

Government agencies are using SPSS to model outcomes for policy changes, ensuring algorithms are fair and decisions are backed by explainable data.

- Citizen Data Science

SPSS is a leading tool in the “citizen data scientist” movement, empowering finance teams, marketing analysts, and HR professionals to run predictive models without relying solely on IT.

A quick real-life use case

A large healthcare provider recently used SPSS Modeler to predict patient readmission risks. The team was able to build, test, and deploy a model within weeks, with complete traceability and audit logs to satisfy HIPAA and internal governance requirements.

Looking Ahead: The Future of SPSS in AI

The buzz in 2025 is all about hybrid AI, the blending of traditional statistical models with generative AI and LLMs. SPSS, with its deep roots in statistics and newer integrations with Watson and Python, is perfectly positioned to lead this evolution.

Whether you're an analyst, a data science leader, or a decision-maker exploring AI, don’t sleep on SPSS. It’s more relevant than ever.

💡 Are you still thinking of SPSS as “just” a stats tool? Let’s talk. I’d love to hear how you're applying predictive analytics in your organisation, or explore where SPSS could fit into your journey to responsible AI.

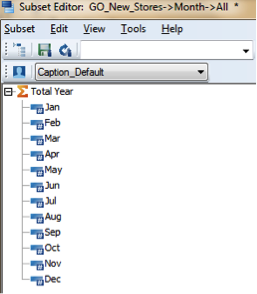

In this blog post, I aim to demonstrate a simple yet effective technique to display the members returned by a set as a list in Excel. This serves as a quick and efficient method for analysing the results from your static or MDX-based sets.


This approach offers an alternative to the TM1ELLIST function in Planning Analytics for Excel (PAfE), which also returns an array of members based on an MDX expression or a set. However, TM1ELLIST requires the user to enter the formula manually to view the results, and it does not support hierarchies.

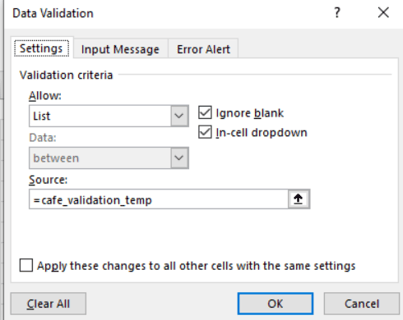

When you add a SUBNM or TM1SET function in PAfE, data validation may be added to the cell automatically, allowing selection from a dropdown list.

Upon navigating to the Data Validation option in Excel, you will discover a hidden named range called “cafe_validation_temp” added under the “Source” field.

This is a generic named range that is applied to all cells containing SUBNM and TM1SET functions.

The key to this technique is to reference the “cafe_validation_temp” named range in a cell. In Excel worksheets that support Dynamic Arrays, this automatically displays the members as a spilled list.

Doing so will automatically display the members of the active cell that contains SUBNM or TM1SET functions. As you select a different cell containing these functions, the list will automatically update to show the members returned from those functions.

Furthermore, as TM1SET is hierarchy-aware, it seamlessly returns results from hierarchies, enhancing the flexibility and depth of analysis.

For training and support please feel free to email media@octanesolutions.com.au

In the realm of digital transformation, the concept of digital labor has emerged as a game-changer for businesses seeking efficiency, agility, and innovation. IBM watsonx Orchestrate, a powerhouse in the AI and data orchestration space, takes center stage in this digital evolution. This blog explores the pivotal role played by watsonx Orchestrate in reshaping digital labor and how it empowers organizations to harness the full potential of artificial intelligence (AI) and data science.

Orchestrate allows you to add and train new automations from a variety of sources, enabling users to easily work across existing systems using a single UI.


Understanding Digital Labor:

Digital labor refers to the use of digital technologies, including AI, automation, and robotics, to augment or replace human tasks and processes. It's a paradigm shift in how work is done, leveraging technology to enhance productivity, reduce errors, and enable humans to focus on more strategic and creative aspects of their roles.

"Companies that effectively apply intelligent automation across the enterprise expect to outshine peers in profitability, revenue growth, and efficiency over the next 3 years."

IBM watsonx Orchestrate and Digital Labor:

- Workflow Automation for Operational Efficiency: One of the key pillars of digital labor is workflow automation, and watsonx Orchestrate excels in this domain. By automating intricate AI and data science workflows, the platform significantly reduces manual effort, streamlining processes and enhancing operational efficiency. This allows organizations to accomplish more with less, freeing up human resources for high-value tasks.

- Collaboration for Enhanced Productivity: Digital labor is not about replacing human workers but augmenting their capabilities. watsonx Orchestrate fosters collaboration among cross-functional teams, bringing together data scientists, developers, and domain experts. This collaborative environment accelerates problem-solving, decision-making, and innovation, creating a synergistic relationship between digital labor and human expertise.

- Scalability to Meet Growing Demands: As organizations scale their digital labor initiatives, scalability becomes a critical factor. watsonx Orchestrate provides the flexibility to scale horizontally and vertically, ensuring that the platform can seamlessly adapt to the growing demands of AI and data science projects. This scalability is essential for organizations aiming to expand their digital labor capabilities without compromising performance.

- Model Monitoring and Management for Continuous Improvement: In the era of digital labor, continuous improvement is paramount. watsonx Orchestrate includes robust tools for monitoring and managing AI models in production. This ensures that digital labor processes based on AI models deliver consistent and reliable results over time. The platform's capabilities contribute to the iterative refinement of digital labor processes, optimizing outcomes and enhancing overall performance.

- AI Explainability and Ethical Digital Labor: Transparent digital labor practices are crucial for building trust and ensuring ethical use of AI. watsonx Orchestrate provides tools for explaining AI model decisions, addressing the interpretability challenge often associated with complex AI systems. Additionally, the platform includes features for detecting biases, aligning digital labor practices with ethical standards and promoting fairness in decision-making.

There are more than 2,000 activities that make up 800 full-time occupations that are part of knowledge work. However, only 5% of these full-time occupations could be fully automated using existing technology. That means the remaining 95% of occupations require cognitive abilities.

Benefits for Businesses:

- Accelerated Time-to-Value: By automating and streamlining AI and data science workflows, organizations can significantly reduce the time it takes to move from ideation to deployment, ultimately accelerating their time-to-value for AI initiatives.

- Improved Collaboration: The collaborative features of watsonx Orchestrate facilitate better communication and knowledge sharing among teams, leading to more effective and impactful AI solutions.

- Enhanced Governance and Compliance: The platform provides robust governance and compliance features, ensuring that organizations can meet regulatory requirements and maintain a high standard of data ethics.

- Cost-Efficiency: With the ability to scale and the flexibility of deployment options, watsonx Orchestrate helps organizations optimize costs by aligning infrastructure with project requirements.

In the era of AI and data-driven decision-making, IBM watsonx Orchestrate stands out as a powerful solution for organizations looking to harness the full potential of their AI and data science initiatives. With its automation capabilities, collaborative environment, and emphasis on ethical AI, watsonx Orchestrate is poised to become a key player in the journey towards building intelligent, transparent, and scalable AI solutions. As businesses continue to navigate the complexities of the digital age, platforms like watsonx Orchestrate provide the tools needed to turn data into a strategic asset and drive innovation in the ever-evolving landscape of AI.

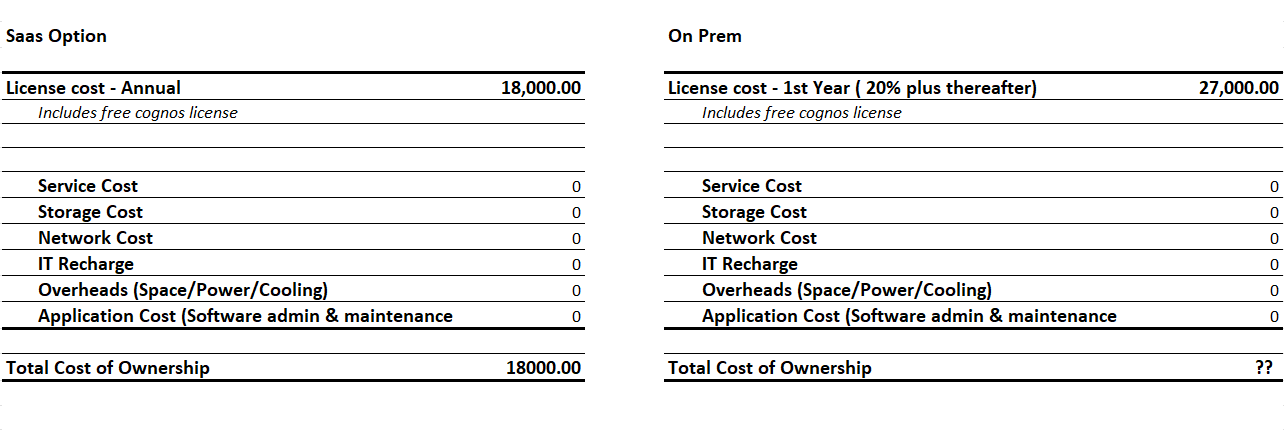

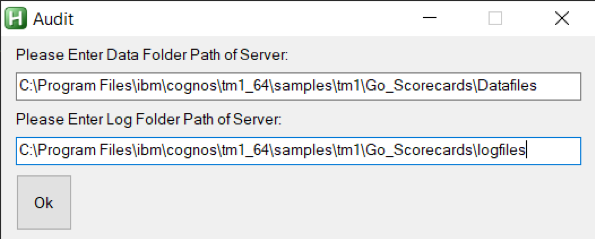

In today's digital age, businesses are constantly seeking ways to optimize their data management processes. One solution that has gained significant popularity is the migration of planning analytics to the cloud. This strategic move offers several advantages that businesses can leverage to enhance their data management capabilities.


Why Cloud?

One of the primary reasons why companies are making the switch to cloud-based planning analytics is the instant global presence it provides. By storing data in data centers located around the world, organizations can ensure that their data is accessible to users whenever and wherever it's needed.

Cost Considerations

- Server Costs – Hardware, Software, and Administration

- Storage Costs – Hardware and Administration

- Network Costs – Hardware and Administration

- IT Labor Costs – Server and Virtualization Administration

- Overhead Costs – Space, Power, Cooling

- Application Costs – Software Maintenance, Administration

Business Considerations

- Faster go-to-market – ever-changing and ever-evolving business conditions

- Scalability – up and down

- Flexibility – address seasonality, tests, and short-term demands

- Focus – on business and core competence rather than IT

This not only reduces latency but also enables quick decision-making, especially in time-sensitive situations. Additionally, having a global presence opens up new revenue streams as companies can cater to customers from all over the world.

Another key benefit of migrating planning analytics to the cloud is the high performance and reliability it offers. Cloud infrastructure can be tailored to meet the specific needs of users, ensuring optimal performance and improved security.

While multi-tenant cloud environments are commonly available, opting for single-tenant cloud environments can further enhance performance and security.

The instant deployment capability of cloud services is also a major advantage for businesses. Cloud providers offer pre-configured services, minimizing the setup time required. This allows organizations to provision services immediately upon order, enabling them to build powerful applications and deliver them securely via web, mobile, or desktop.

The agility of cloud-based deployment also eliminates the need for complex IT infrastructures and support, streamlining the overall process.

Migrate


Scalability is another crucial factor driving the migration of planning analytics to the cloud. Businesses need a platform that can easily scale as they grow. Cloud customers can add more users, storage, and features as their requirements evolve.

This flexibility enables companies to pay for only what they need, avoiding unnecessary expenses on software and hardware. Moreover, cloud users can scale up temporarily when there is a spike in usage and then scale back down, further optimizing costs.

Disclaimer: The pricing may vary. This is only an example of how the computation will look on the actual

Security is a top concern for all businesses, and the cloud offers robust security features to address this. Cloud providers stay up-to-date with industry standards and compliance certifications to ensure data security. Single-tenant cloud environments provide an additional layer of security, with only one point of access and no risk of data being combined or accessed by unauthorized users. This level of security minimizes the chances of data breaches, which could have severe consequences for any organization.

Pitfalls – Be Aware! Key Considerations

- All clouds are not equal – evaluate Public, Private, and Hybrid clouds and their unique strengths.

- All-in in one go – determine which applications are best suited for migration, the associated risks, and timelines.

- Don’t leave On-Premise behind – it’s key to tune your on-premise applications for cloud utilization.

- Hands-off Outsourcing – even though it’s on the cloud, lacking in-house expertise can cost dearly and result in project failures.

- Keep a Scorecard – evaluate each workload's economic, security, and risk profiles, and keep track of them.

- Move beyond lift & shift – using the cloud shouldn’t only be about cheap hardware and storage.

One of the most compelling reasons to migrate planning analytics to the cloud is the cost savings it offers. By eliminating capital expenses for infrastructure, such as servers and storage, businesses can save significant amounts of money. Maintenance and upgrades associated with physical storage are also eliminated, freeing up resources for other strategic initiatives. With the pay-as-you-go model, companies only pay for the storage and features that are actively used, ensuring cost optimization.

Run


In conclusion, migrating planning analytics to the cloud provides businesses with a range of benefits, including global accessibility, high performance, instant deployment, scalability, enhanced security, and cost savings. This strategic move equips organizations with the agility and capabilities required to thrive in today's digital landscape.

By embracing the cloud, businesses can not only meet the changing demands of employees for mobile working and remote access but also gain a competitive edge over their peers who rely on legacy on-premises storage solutions.

Octane Fixed Price Upgrade – A$19,950

Be in the cloud in 14 days

Get IBM Planning Analytics (TM1) and its associated applications Workspace and the Microsoft Excel interface on the cloud.

We perform a like-for-like migration of data from TM1 Perspectives to TM1 on cloud, including integration of data sources and setting up licenses and user access. We include 4 hours of user training to ensure users can explore and use TM1 properly. Book a free discovery call.

- Like-for-like migration

- Unlimited cubes

- Unlimited dimensions

- Unlimited hierarchies

- 20 Excel reports

- Up to 2 TM1 instances

- Up to 3 environments

- Use of the proprietary Octane checklist

Gone are the days when we had no control or alert mechanism for the TM1 database and the CPU and memory it consumes, and had to react in the nick of time before the TM1 server crashed.

I am sure all TM1 lovers, administrators and business users who have had these experiences in the past will connect with what I am referring to. For new Planning Analytics users: in earlier versions of TM1/Planning Analytics, we had little visibility of how much RAM a TM1 instance could use, and there was no inbuilt alert mechanism.

TM1 Database Alert Mechanism:

Issue:

As most of you know, TM1 Server loves memory: the more RAM available, the better the performance. Because of the trade-off between cost and memory availability, there has always been an upper limit on the RAM available to TM1 Server.

What is new:

We now have an inbuilt mechanism in Planning Analytics Workspace, in which we can set certain configurations and look for alerts at different levels.

Administrators can now set database thresholds and alert configurations in a single tab on the Database settings page for the individual database in Planning Analytics Administration.

Isn’t that good news! To use this, the Planning Analytics Workspace version must be 2.0.46 or higher. In previous versions of Planning Analytics Administration, it was not possible to apply unique settings for each database: thresholds and alerts were set on separate tabs of a configuration page, and the settings were applied to all databases in the environment.

Navigation:

For database settings, go to the Administration page, click Database as shown below.

Click the settings icon (highlighted), open Database settings, and move to Thresholds and alerts.

The administrator can enter values for the Warning threshold and Critical threshold and enable alerts for different types of resource usage, as shown below.

The administrator can also set the thread auto-refresh time interval.
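As a rough illustration of how a warning threshold and a critical threshold relate to each other, the short Python sketch below classifies a resource reading. It is not how Planning Analytics Administration evaluates alerts internally; the function name, the percentage values and the inclusive comparison are all assumptions made for this example.

```python
def classify_usage(value, warning, critical):
    """Classify a resource reading against two thresholds.

    The names and the ">=" comparison are illustrative assumptions,
    not the product's actual alert logic.
    """
    if warning > critical:
        raise ValueError("warning threshold must not exceed critical threshold")
    if value >= critical:
        return "critical"
    if value >= warning:
        return "warning"
    return "ok"


# Hypothetical memory readings (percent) checked against 80 / 90 thresholds.
for reading in (65, 85, 95):
    print(reading, classify_usage(reading, warning=80, critical=90))
```

Keeping the warning value below the critical value gives administrators a window in which to act before the server is under real pressure.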

Wonderful! Now you can implement these in your environment. If you have any doubts, we are here to help. Contact us today to find out how we can help you leverage your data for true business intelligence.

You may also like reading “ Predictive & Prescriptive-Analytics ” , “ Business-intelligence vs Business-Analytics ” ,“ What is IBM Planning Analytics Local ” , “IBM TM1 10.2 vs IBM Planning Analytics”, “Little known TM1 Feature - Ad hoc Consolidations”, “IBM PA Workspace Installation & Benefits for Windows 2016”.

Octane Software Solutions Pty Ltd is an IBM Registered Business Partner specialising in Corporate Performance Management and Business Intelligence. We provide our clients with advice on best practices and help scale up applications to optimise their return on investment. Our key services include Consulting, Delivery, Support and Training. Octane has its head office in Sydney, Australia as well as offices in Canberra, Bangalore, Gurgaon, Mumbai, and Hyderabad.

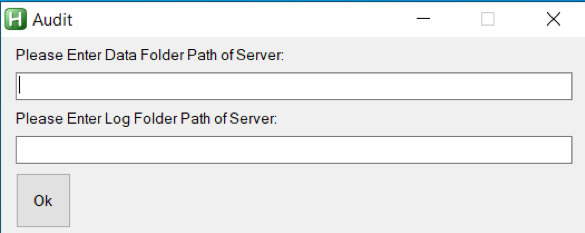

This blog explains a few TM1 tasks that can be automated using AutoHotKey. For those who don't already know, AutoHotKey is an open-source scripting language used for automation.

1. TM1 process run history from the TM1 server log:

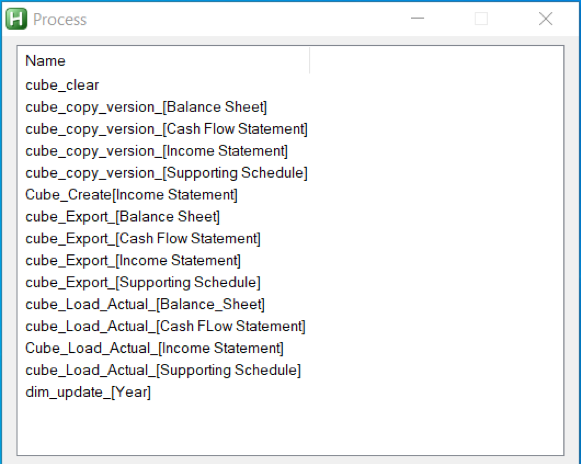

With the help of AutoHotKey, we can read each line of a text file using the loop function; the content of each line is stored automatically in a built-in variable of the function. We can also read the filenames inside a folder using the same function, and again the filenames are stored in a built-in variable. By making use of this, we can extract TM1 process information from the TM1 server log and display it in a GUI. Let’s go through the output of an AutoHotKey script that gives details of process runs.

- Below is a screenshot of the output when the script is executed. Here we need to provide the paths of the log folder and the data folder.

- After entering the details and clicking OK, the list of processes in the server's data folder is displayed in the GUI.

- Once the list of processes is displayed, double-click a process to get its run history. In the screenshot below we can see the status, date, time, average run time of the process, error message, and the username that executed the process, thereby showing the TM1 process history from the TM1 server log.
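The same line-by-line idea can be sketched outside AutoHotKey. The Python example below is not the script behind the screenshots; it is a minimal illustration that assumes "finished" lines in the server log follow the layout shown in the comment. The sample lines, thread numbers and regular expression are assumptions, so check them against your own tm1server.log before relying on them.

```python
import re
from collections import defaultdict

# Assumed layout of a "finished" line (hypothetical; adjust to your own log):
# <thread> [<session>] INFO <date> <time> TM1.Process Process "<name>":  finished executing normally, elapsed time <n> seconds
FINISHED = re.compile(
    r'(?P<date>\d{4}-\d{2}-\d{2}) (?P<time>\d{2}:\d{2}:\d{2})\S*\s+TM1\.Process\s+'
    r'Process "(?P<name>[^"]+)":\s+finished executing (?P<status>.+?), '
    r"elapsed time (?P<secs>[\d.]+) seconds"
)


def process_history(lines):
    """Group completed runs by process name; non-matching lines are skipped."""
    history = defaultdict(list)
    for line in lines:
        m = FINISHED.search(line)
        if m:
            history[m["name"]].append({
                "date": m["date"],
                "time": m["time"],
                "status": m["status"],
                "seconds": float(m["secs"]),
            })
    return dict(history)


def average_seconds(runs):
    """Average elapsed time across the runs of one process."""
    return sum(r["seconds"] for r in runs) / len(runs)


# Made-up sample lines in the assumed layout.
SAMPLE_LOG = [
    '5288   [2]   INFO   2019-10-21 09:15:03.456   TM1.Process   Process "cub.price.load.data":  finished executing normally, elapsed time 1.0 seconds',
    '5288   [2]   INFO   2019-10-22 09:15:05.120   TM1.Process   Process "cub.price.load.data":  finished executing normally, elapsed time 3.0 seconds',
    '6012   [3]   INFO   2019-10-22 09:20:00.000   TM1.Server   some unrelated message',
]

runs = process_history(SAMPLE_LOG)["cub.price.load.data"]
print(len(runs), "runs, average", average_seconds(runs), "seconds")
```

To run it against a real log, pass an open file object instead of `SAMPLE_LOG`; the function only needs an iterable of lines.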

2. Opening TM1Top after updating tm1top.ini file and killing a process thread

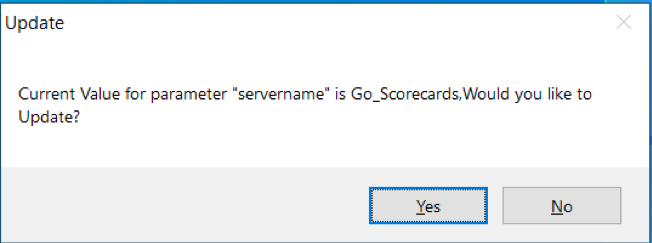

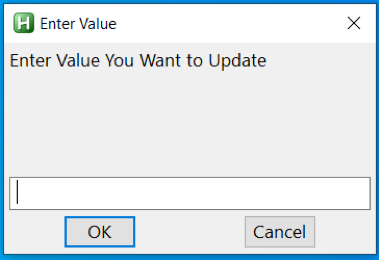

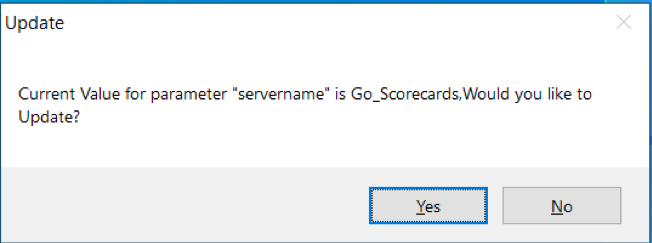

With the help of the same loop function we used earlier, we can read the tm1top.ini file and update it using the fileappend function in AutoHotKey. Let’s again go through the output of an AutoHotKey script, this time one that opens TM1Top.

- When the script is executed, the screen below comes up, asking whether to update the adminhost parameter of the tm1top.ini file.

- On clicking “Yes”, a new screen comes up where the new adminhost must be entered.

- After a value is entered, a new screen asks whether to update the servername parameter of the tm1top.ini file.

- On clicking “Yes”, a new screen comes up where the new servername must be entered.

- After a value is entered, TM1Top is displayed. A username and password are required to verify access.

- Once access is verified, just enter the ID of the thread that needs to be cancelled or killed.
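For readers who prefer to see the update step as code, here is a small Python sketch of rewriting the adminhost and servername values. It is an illustration rather than the AutoHotKey script described above, and it works on the file's text, not on the file itself. The sample ini content, including the section header and the refresh line, is assumed for the example.

```python
def update_ini_text(text, updates):
    """Return ini text with key=value lines replaced for the keys in `updates`.

    Keys are matched case-insensitively; keys that are not already
    present are appended at the end.
    """
    remaining = {k.lower(): (k, v) for k, v in updates.items()}
    out = []
    for line in text.splitlines():
        key = line.split("=", 1)[0].strip().lower() if "=" in line else None
        if key in remaining:
            name, value = remaining.pop(key)
            out.append(f"{name}={value}")
        else:
            out.append(line)
    for name, value in remaining.values():
        out.append(f"{name}={value}")
    return "\n".join(out) + "\n"


# Illustrative file content; your tm1top.ini may contain other entries.
SAMPLE_INI = "[tm1top]\nadminhost=oldhost\nservername=oldserver\nrefresh=2\n"

print(update_ini_text(SAMPLE_INI, {"adminhost": "newhost",
                                   "servername": "planning sample"}))
```

Working on text keeps the sketch easy to test; writing the result back to tm1top.ini is then a single file write.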

IBM has identified a defect in code introduced in TM1 10.2.2 Fix Pack 7 that is part of all subsequent releases before 2.0.9. This defect can cause data loss within cubes even after a SaveDataAll has been performed in the TM1 server. Let us get into the details.

What is the defect :

There is a possibility of losing data even after a SaveDataAll activity is performed. This defect (APAR PH19984) was identified recently by IBM. It is triggered only when the conditions below are met.

- No SaveDataAll: SaveDataAll has not been performed since the TM1 Server was restarted.

- Lock contention: lock contention specific to a public subset, TI process or chore.

- Rollback: the SaveDataAll thread rolls back due to lock contention.

- Server restart: the TM1 server restarts following the points mentioned above.

How to Find:

To find out whether your TM1 Server might encounter this issue, please follow the steps below.

- If not already enabled, enable the debug options in tm1s-log.properties:

  TM1.Lock.Exception=DEBUG

  TM1.SaveDataAll=DEBUG

- Identify the SaveDataAll thread: look for “Starting SaveDataAll” in tm1server.log.

- Check whether a lock contention rollback on SaveDataAll has been triggered in tm1server.log. Look for “CommitActionLogRollback: Called for thread ‘xxxxx’” and check whether xxxxx is the SaveDataAll thread.

- If “CommitActionLogRollback: Called for thread ‘xxxxx’” is found before “Leaving SaveDataAll critical section”, there is a high chance that you are prone to this defect, which might cause data loss.
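The check in the steps above can be sketched as a short Python scan of tm1server.log. The three message strings come from the steps themselves. Everything else is an assumption for illustration, in particular that the first token on each log line is the thread ID and that the sample lines resemble real ones.

```python
import re

# Thread ID quoted in the rollback message, e.g. "... Called for thread '4120'".
ROLLBACK = re.compile(r"CommitActionLogRollback: Called for thread\s+\W?(\w+)")


def find_savedataall_rollbacks(lines):
    """Return (line_number, thread_id) for each rollback that names a thread
    currently between "Starting SaveDataAll" and
    "Leaving SaveDataAll critical section".

    Assumes the first whitespace-separated token of a line is its thread ID.
    """
    active = set()  # threads currently inside SaveDataAll
    hits = []
    for number, line in enumerate(lines, start=1):
        thread = line.split(None, 1)[0] if line.strip() else ""
        if "Starting SaveDataAll" in line:
            active.add(thread)
        elif "Leaving SaveDataAll critical section" in line:
            active.discard(thread)
        else:
            m = ROLLBACK.search(line)
            if m and m.group(1) in active:
                hits.append((number, m.group(1)))
    return hits


# Made-up sample lines in an assumed layout.
SAMPLE_LOG = [
    "4120   [1]   DEBUG   2019-12-01 02:00:00.000   TM1.SaveDataAll   Starting SaveDataAll",
    "3300   [4]   DEBUG   2019-12-01 02:00:01.000   TM1.Lock.Exception   CommitActionLogRollback: Called for thread '4120'",
    "4120   [1]   DEBUG   2019-12-01 02:00:05.000   TM1.SaveDataAll   Leaving SaveDataAll critical section",
]

print(find_savedataall_rollbacks(SAMPLE_LOG))

# For a real log, pass an open file instead, for example:
# with open("tm1server.log", encoding="utf-8", errors="replace") as fh:
#     print(find_savedataall_rollbacks(fh))
```

An empty result only means the pattern was not found in the lines scanned; it does not prove the server is unaffected.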

Impacted Users :

All Clients using Planning Analytics On-Cloud and On-Premise (Local) TM1 Server versions 10.2.2 Fix pack 7 and PA version 2.0 till 2.0.9.

How to avoid :

This can be avoided in two ways.

- Automate SaveDataAll (best practice) to run at regular intervals, or else run it manually.

- For PA Local users, apply the fix released by IBM on 17th December 2019.

Octane Software Solutions is an IBM Gold Business Partner, specialising in TM1, Planning Analytics, Planning Analytics Workspace and Cognos Analytics, Descriptive, Predictive and Prescriptive Analytics.

You may also like reading “ What is IBM Planning Analytics Local ” , “IBM TM1 10.2 vs IBM Planning Analytics”, “Little known TM1 Feature - Ad hoc Consolidations”, “IBM PA Workspace Installation & Benefits for Windows 2016”, PA+ PAW+ PAX (Version Conformance), IBM Planning Analytics for Excel: Bug and its Fix , Adding customizations to Planning Analytics Workspace

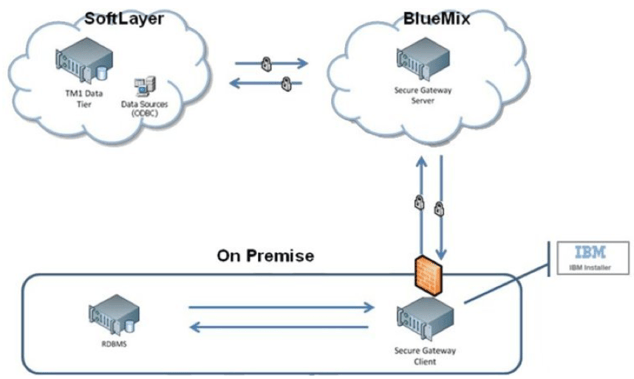

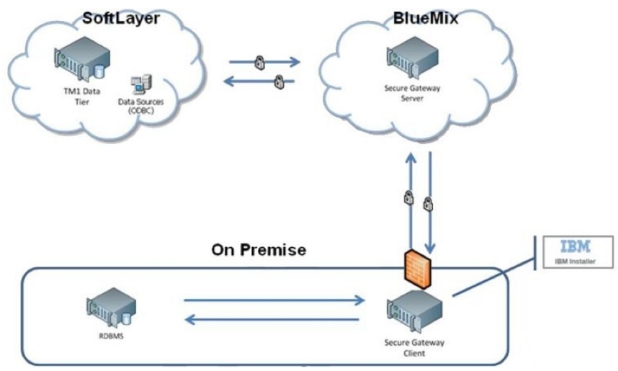

Before you read further, please note that this blog covers the Secure Gateway connection used for Planning Analytics deployed as an “on-cloud” Software as a Service (SaaS) offering.

This blog details the steps to renew a Secure Gateway token, either before or after the token has expired.

What is IBM Secure Gateway:

IBM Secure Gateway for IBM Cloud service provides a quick, easy and secure solution for establishing a link between Planning Analytics on cloud and a data source, typically an RDBMS such as IBM DB2, Oracle Database, SQL Server or Teradata. Data sources can reside either “on-premise” or “on-cloud”.

Secure and Persistent Connection:

By deploying this light-weight and natively installed Secure Gateway Client, a secure, persistent connection can be established between your environment and cloud. This allows your Planning Analytics modules to interact seamlessly and securely with on-premises data sources.

How to Create IBM Secure Gateway:

Click on Create-Secure-Gateway and follow steps to create connection.

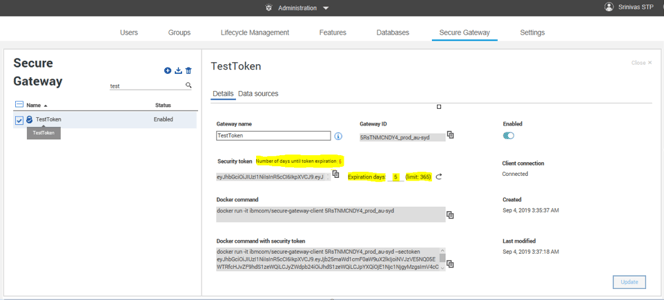

Secure Gateway Token Expiry:

If the Token has expired, Planning Analytics Models on cloud cannot connect to source systems.

How to Renew Token:

Follow the steps below to renew the Secure Gateway token.

- Navigate to the Secure Gateway

- Click on the Secure Gateway connection for which the token has expired.

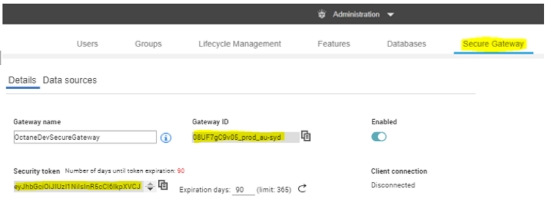

- Go to Details as shown below and enter 365 (the maximum) beside Expiration days. 365 days, or one year, is the longest period the token can run before it expires again. Once done, click Update.

This should reactivate your token, and TIs should be able to interact with the source system again.


Since the launch of Planning Analytics a few years ago, IBM has been recommending that users move to Planning Analytics for Excel (PAX) from TM1 Perspectives and TM1 Web. As new users migrate to PAX every day, it’s prudent that I share my experiences.

This blog is part of a series in which I try to highlight different aspects of this migration. This one details a bug I encountered during a project in which our client was using PAX, and the steps taken to mitigate the issue.

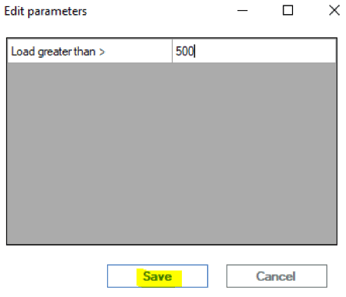

What was the problem:

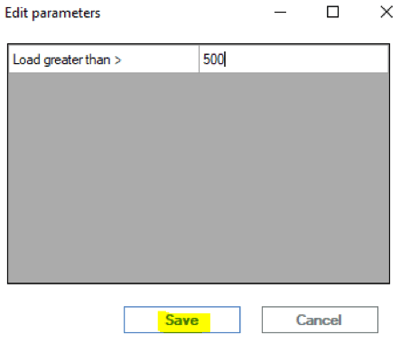

Scenario: a Planning Analytics user triggers a process from the Navigation Pane within PAX, uses the “Edit parameters” option to enter a value for a numeric parameter, and clicks Save to run the process.

Issue: when run this way, the process fails to complete. However, if the same process is run using other tools such as Architect, Perspectives or TM1 Web, it completes successfully.

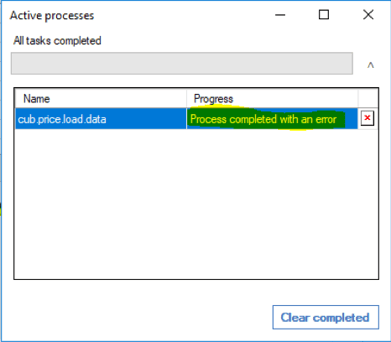

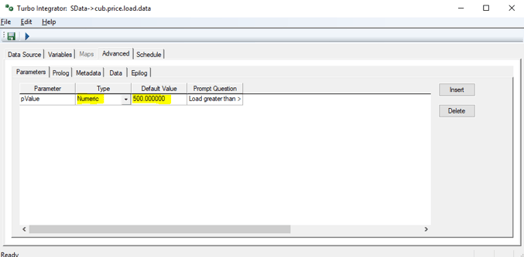

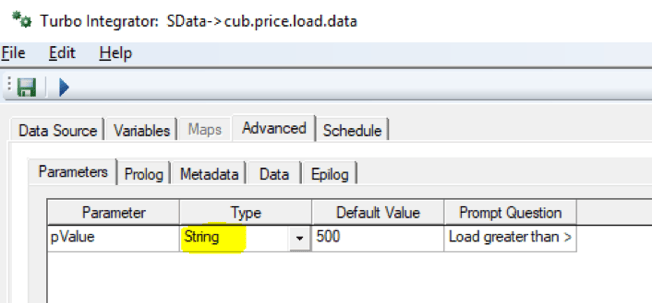

For example, let’s assume a process, cub.price.load.data, takes a numeric value as input to load data. The user clicks Edit parameters, enters a value, and saves it to run the process. The process fails. Refer to the attached screenshots.

Using PAX.

Using Perspective

What’s causing this:

During our analysis, we found that when PAX users click Edit parameters, enter a value for the numeric parameter and save it, the numeric parameter is converted into a String parameter in the backend, thereby modifying the TI process.

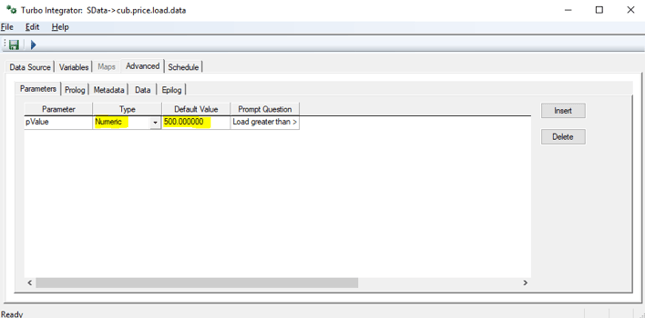

As the TI was designed and developed to handle a numeric variable and not a string, a change in type of the variable from Numeric to String was causing the failure. Refer screenshots below.

When created,

Once saved,

What’s the fix?

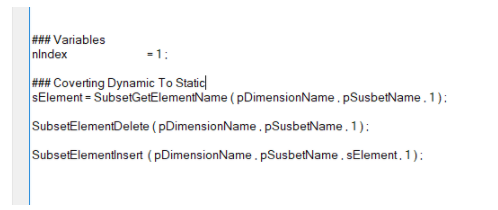

The section below illustrates how we mitigated and remediated this bug.

For all TIs using a numeric parameter:

- List all TIs that use a numeric type in Parameters.

- Convert the “Type” of these parameters to String and rename each parameter to identify it as a string variable (best practice). In the earlier example, I called it pValue while it held a numeric value and psValue once it was a String.

- Next, in the Prolog of the TI, add extra code to convert the value of this parameter back into the original numeric variable. For example: pValue = Numbr(psValue);

- This should fix the issue.

Note that while there are many different ways to handle this issue, this approach best suited our purpose and the project, especially considering the time and effort it would require to modify all affected processes.

Planning Analytics for Excel: versions affected

The latest available version (as of 22nd October 2019) is 2.0.46, released on 13th September 2019. Before publishing this blog, we spent a good amount of time testing this bug on all available PAX versions. It exists in all Planning Analytics for Excel versions up to 2.0.46.

Permanent fix by IBM:

This has been raised with IBM, and the severity of the issue has been explained. We believe it will be fixed in the next release of Planning Analytics for Excel. According to IBM (refer to the image below), the fix appears to be part of the upcoming version 2.0.47.


This blog covers all the steps to install the IBM Secure Gateway Client.

IBM Secure Gateway Client installation is one of the crucial steps towards setting up secure gateway connection between Planning Analytics Workspace (On-Cloud) and RDBMS (relational database) on-premise or on-cloud.

What is IBM Secure Gateway :

IBM Secure Gateway for IBM Cloud service provides a quick, easy, and secure solution for establishing a link between Planning Analytics on cloud and a data source. The data source, typically an RDBMS such as IBM DB2, Oracle Database, SQL Server or Teradata, can reside on an “on-premise” network or on “cloud”.

Secure and Persistent Connection :

A Secure Gateway must be created before TurboIntegrator can access on-premise RDBMS data sources. It is useful for importing data into TM1 and for drill-through capability.

By deploying the light-weight and natively installed Secure Gateway Client, a secure, persistent and seamless connection can be established between your on-premises data environment and cloud.

The Process:

This is a two-step process:

- Create a data source connection in Planning Analytics Workspace.

- Download and install the IBM Secure Gateway Client.

To download the IBM Secure Gateway Client:

- Login to Workspace ( On-Cloud)

- Navigate to Administrator -> Secure Gate

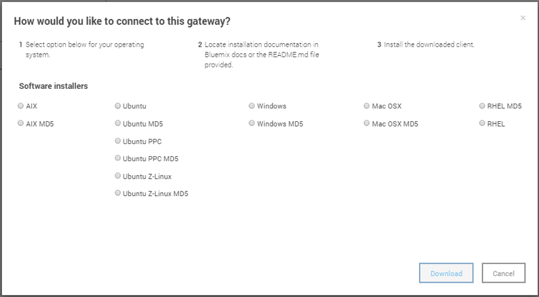

Click the icon shown below. A pop-up appears in which you select the operating system on which you want to install the client.

Choose the appropriate option and click Download.

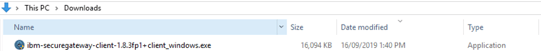

If downloads go to the default download folder, you will find the software in your Downloads folder, as shown below.

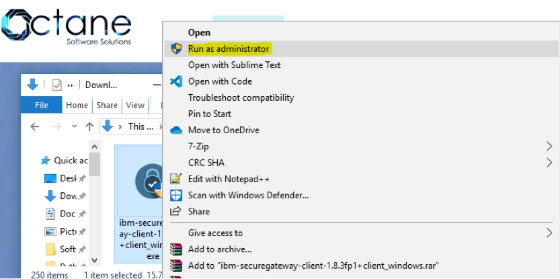

Installing the IBM Secure Gateway Client:

To install this tool, right-click the installer and run it as administrator.

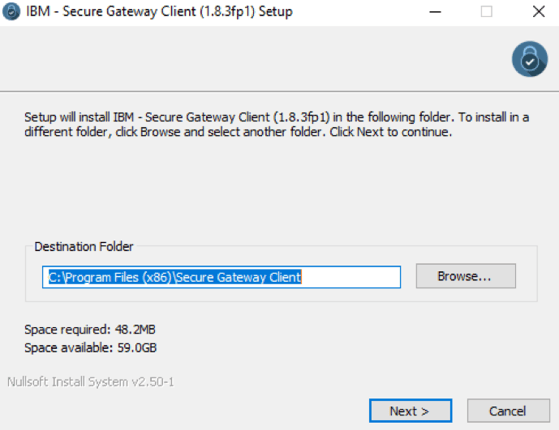

Keep the default settings for Destination folder and Language, unless you need to modify.

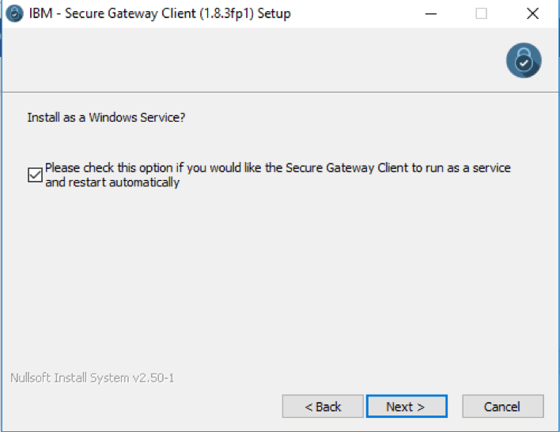

Check the box below if you want the client to run as a Windows service.

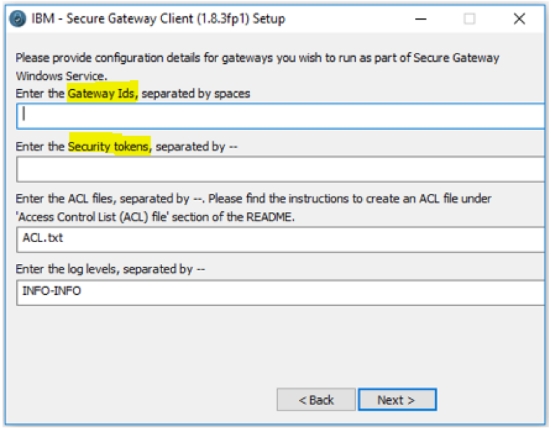

This is an important step: we are required to enter the gateway IDs and security tokens to establish a secure connection. These need to be copied over from the secure connection created earlier in Planning Analytics Workspace (refer to 1. Create Data source connection in workspace).

The figure below shows where Workspace displays the Gateway ID and Security Token. These need to be copied and pasted into the Secure Gateway Client (refer to the illustration above).

If you choose to launch the client with connections to multiple gateways, take care when providing the configuration values.

- The gateway IDs need to be separated by spaces.

- The security tokens, ACL files and log levels should be delimited by --.

- If you don't want to provide any of these three values for a particular gateway, please use 'none'.

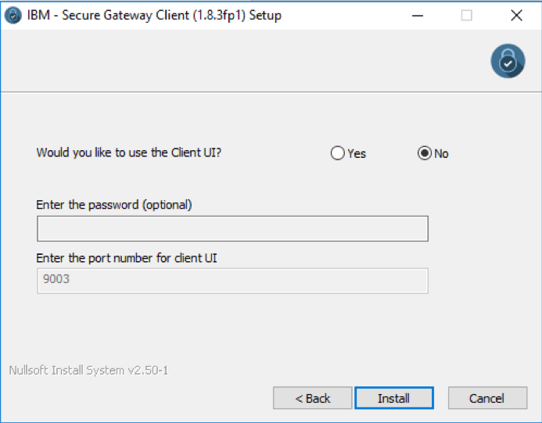

- If you want the Client UI, select that option; otherwise select No.

Note: Please ensure that there are no residual white spaces.
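To make the delimiter rules concrete, the Python sketch below assembles the values for several gateways: IDs joined by spaces, the other three fields joined by `--`, and `none` wherever a value is not supplied. The function, the dictionary keys and the gateway IDs and tokens are hypothetical placeholders. The sketch only builds the strings you would paste into the installer; it does not call the Secure Gateway Client.

```python
def build_client_values(gateways):
    """Build the installer field values for one or more gateways.

    `gateways` is a list of dicts with a required "id" and optional
    "token", "acl" and "loglevel" keys (key names are hypothetical).
    Surrounding whitespace is stripped from every value.
    """
    def joined(field):
        # Missing or empty values become the literal 'none'.
        return "--".join((g.get(field) or "none").strip() for g in gateways)

    return {
        "gateway_ids": " ".join(g["id"].strip() for g in gateways),
        "security_tokens": joined("token"),
        "acl_files": joined("acl"),
        "log_levels": joined("loglevel"),
    }


# Placeholder IDs and tokens, not real credentials.
values = build_client_values([
    {"id": "gwA", "token": "tokA", "loglevel": "INFO"},
    {"id": "gwB", "token": " tokB "},
])
for field, value in values.items():
    print(f"{field}: {value}")
```

Stripping each value before joining is one way to guard against the residual white space mentioned in the note above.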

Now click Install. Once the installation completes successfully, the IBM Secure Gateway Client is ready for use.

The connection is now ready. Planning Analytics can connect to data sources residing on-premise or on any other cloud infrastructure where the IBM Secure Gateway Client is installed.


As you plan to adopt IBM Planning Analytics on cloud, it’s important to understand what it takes. This blog highlights the areas you will be involved in when you upgrade from on-premise TM1 10.x.x to Planning Analytics on Cloud.

The good thing about cloud is that it comes with TM1/PA and all of its components, such as Planning Analytics Workspace and TM1 Web, installed and configured. This means less effort. Also, all future release upgrades are taken care of by IBM, keeping you up to date with the latest and greatest.

So let’s quickly look at the steps as you set yourself up:

- Welcome Kit

Once the cloud servers are provisioned, you will receive a welcome kit which will have all the details related to DEV and PROD cloud environments.

This document will have things like RDP credentials, shared folder credentials and links for TM1 Web, Workspace and Operations Console.

Note: IBM offers its clients a choice of domain name for both production and development. For example, http://abcdprod.planning-analytics.ibmcloud.com/ and http://abcddev.planning-analytics.ibmcloud.com/

A single blank TM1 instance with the name TM1 is set up initially when the cloud server is provisioned.

- Secure Gateway

Create a secure gateway to establish a connection between your on-cloud Planning Analytics environment and your on-premises data sources, and then add a data source to the secure gateway. You will also need to install the Secure Gateway Client and test the connection.

- Support Site

Register with the IBM support site to raise and monitor tickets. This is a very important step, as all queries related to the cloud environment, including creating a new instance, require a ticket to be raised.

- FTP Client

Planning Analytics on Cloud includes a dedicated shared folder for storing and transferring files. You can copy files between your local computer (or a shared directory within your company network) and the Planning Analytics cloud shared folder with an FTPS application like FileZilla.

Download, install and configure FileZilla (a free FTP solution) on users’ machines, so that users can upload files to and download files from the Planning Analytics on Cloud shared folder.

If the shared path is stored in the Sys Info cube, update the path there. If you have hard-coded paths in your TIs, I would recommend cleaning up the TIs so that they point to the path stored in the Sys Info cube.
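For scheduled or repeated transfers, the same copy can be scripted rather than done by hand in FileZilla. The following is a minimal sketch using Python’s standard `ftplib`, assuming the shared folder accepts explicit FTPS (the mode `FTP_TLS` speaks). The host, credentials and folder names are placeholders for the values in your welcome kit, and `upload_file` and `connect` are hypothetical helper names, not part of any IBM tooling.

```python
from ftplib import FTP_TLS
from pathlib import Path


def connect(host, user, password):
    """Open an explicit FTPS session and encrypt the data channel."""
    ftps = FTP_TLS(host)        # connects to the control port
    ftps.login(user, password)  # negotiates TLS before sending credentials
    ftps.prot_p()               # switch the data connection to TLS as well
    return ftps


def upload_file(ftps, local_path, remote_dir):
    """Upload one local file into remote_dir over an already-open session."""
    local_path = Path(local_path)
    ftps.cwd(remote_dir)
    with local_path.open("rb") as handle:
        # STOR writes the file under its original name in the current remote directory.
        ftps.storbinary(f"STOR {local_path.name}", handle)
    return f"{remote_dir.rstrip('/')}/{local_path.name}"
```

A typical call would be `upload_file(connect(host, user, password), "actuals.csv", "/prod")`, with all four values taken from your own environment. Keeping the remote folder as a parameter mirrors the advice above: the path lives in one place instead of being hard-coded in every script.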

- Planning Analytics for Excel (PAX)

PAX is the new Excel add-in. It replaces the Perspectives add-in used on-premises.

Download, install and configure PAX on users’ machines.

Note: Schedule PAX training before asking users to test cubes, dimensions, reports and data reconciliation activities. PAX comes with new ways of doing things, which require a bit of hand-holding initially.

- Upgrade perspectives action buttons

Action buttons used in TM1 10.x.x need to be upgraded before they can be used in Planning Analytics for Excel.

Note: Once an Excel report or template is upgraded, it will no longer work in Perspectives. It is essential to take backups of all Excel reports before performing this task.
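The backup step can be scripted so that nothing is missed. Here is a minimal sketch, assuming the reports sit under a single folder tree; the function name `backup_excel_reports` and the `perspectives_backup_` folder prefix are illustrative choices, not anything PAX provides.

```python
import shutil
from datetime import datetime
from pathlib import Path


def backup_excel_reports(source_dir, backup_root):
    """Copy every Excel workbook under source_dir into a new timestamped folder."""
    source_dir = Path(source_dir)
    stamp = datetime.now().strftime("%Y%m%d_%H%M%S")
    target_dir = Path(backup_root) / f"perspectives_backup_{stamp}"
    copied = []
    # Collect the matches first, so the scan is finished before any copy is written.
    workbooks = sorted(p for p in source_dir.rglob("*.xls*") if p.is_file())
    for workbook in workbooks:
        # Recreate the original sub-folder layout inside the backup folder.
        destination = target_dir / workbook.relative_to(source_dir)
        destination.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(workbook, destination)  # copy2 also keeps file timestamps
        copied.append(destination)
    return copied
```

The function returns the list of copied files, which can be compared against the report inventory in your test plan before any action buttons are upgraded.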

In Summary:

- Have a test plan to validate all the objects including security, reports and performance of TIs.

- Take this opportunity to clean up the data folder, remove redundant objects and optimise cubes.

- Have a training plan in place as new features are added to PAX and PAW very frequently.

- Keep an eye on what is new. Below are the links for PAX and PAW updates

We at Octane have vast & varied experience in migrating on-premise TM1 10.x.x to Planning Analytics on cloud.

Contact us at info@octanesolutions.com.au to find out how we can help.

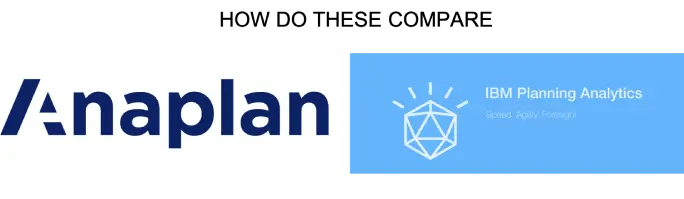

IBM Planning Analytics (TM1) vs Anaplan

There has been a lot of chatter lately around IBM Planning Analytics (powered by TM1) vs Anaplan. Anaplan is a relatively new player in the market and has recently listed on the NYSE. It reported revenue of USD 240.6M in 2019 (interestingly, it also reported an operating loss of USD 128.3M). Compare that with IBM, which had 2018 revenue of USD 79.5 billion (there is no clear information on how much of this came from the Analytics area) and a net profit of 8.7 billion. The global Enterprise Performance Management (EPM) market is around 3.9 billion and is expected to grow to 6.0 billion by 2022. The size of spreadsheet-based processes is a whopping 60 billion (Source: IDC).

Anaplan was born out of the old Adaytum Planning application, which was acquired by Cognos; Cognos was in turn acquired by IBM in 2007. Anaplan also spent 176M on sales and marketing, so most people in the industry will have heard of it or come across some form of its marketing. (Source: Anaplan.com)

I’ve decided to take a closer look at some of the crucial features and functionality and assess how Anaplan really stacks up.

Scalability

There are some issues around scaling up Anaplan cubes where large datasets are under consideration (8 billion cell limit? While this sounds big, most of our clients reach this scale fairly quickly with medium complexity). With IBM Planning Analytics (TM1) there is no need to break up a cube into smaller cubes to meet data limits. There is also no need to combine several dimensions into a single dimension. Cubes are generally developed with business requirements in mind, not system limitations, which gives business analysts far more freedom.

For example, if enterprise-wide reporting were the requirement in Anaplan, the cubes might need to be broken up along a logical dimension such as region or division. This in turn would make consolidated reporting laborious and make slicing and dicing the data difficult, almost impossible.
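To see how quickly a model of medium complexity reaches that scale, multiply the dimension sizes together. The sketch below uses invented dimension sizes purely for illustration and takes the 8 billion figure quoted above as an assumption rather than a confirmed product limit. It counts addressable cells, not populated ones.

```python
from math import prod

# The figure quoted in the text; treated here as an assumption, not a confirmed limit.
CELL_LIMIT = 8_000_000_000


def cell_count(dimension_sizes):
    """Number of addressable cells in a cube: the product of its dimension sizes."""
    return prod(dimension_sizes.values())


def exceeds_limit(dimension_sizes, limit=CELL_LIMIT):
    """True when the cube has more addressable cells than the limit."""
    return cell_count(dimension_sizes) > limit


# Hypothetical enterprise-wide cube (all sizes are made up for illustration).
enterprise_cube = {
    "Account": 500,
    "Cost centre": 200,
    "Product": 1000,
    "Month": 12,
    "Version": 3,
    "Region": 8,
}

# The same cube split into one cube per region, so Region drops out as a dimension.
per_region_cube = {name: size for name, size in enterprise_cube.items() if name != "Region"}

print(cell_count(enterprise_cube), exceeds_limit(enterprise_cube))
print(cell_count(per_region_cube), exceeds_limit(per_region_cube))
```

With these sizes the enterprise-wide cube has 28.8 billion addressable cells, while each per-region cube has 3.6 billion. Splitting by region brings every cube under the assumed limit, but it leaves eight cubes to stitch back together for consolidated reporting, which is the trade-off described above.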

Excel Interface & Integration

Love it or hate it, Excel is the tool of choice for most analysts and finance professionals. I reckon it is unwise to offer a BI tool in today’s world without proper Excel integration. I find Planning Analytics (TM1) users love being able to use the Excel interface to slice and dice, drill up and down hierarchies and drill through to the data source. The ability to create interactive Excel reports with cell-by-cell control of data and formatting is a sure-shot deal clincher.

On the other hand, on exploration I realised that Anaplan offers very limited Excel support.

Analysis & Reporting

In today’s world, users have come to expect drag-and-drop analysis: the ability to drill down and to build and analyse alternate views of a hierarchy in real time. However, if each of these queries requires data to be moved between cubes or requires separate cubes to be built, it is counterproductive. It also increases maintenance and data storage overheads. You lose sight of the single source of truth as you start developing multiple cubes that hold the same data in different forms. This is the case with Anaplan due to the software’s intrinsic limitations.

Anaplan also requires users to invest in a separate reporting layer, as it lacks native reporting, dashboards and data visualisations.

This in turn results in:

- Increased cost

- Increased risk

- Increased complexity

- Limited planning due to data limitations

IBM Planning Analytics, by contrast, offers the out-of-the-box ability to view and analyse all your product attributes and to slice and dice by any of them.

It also comes with a rich reporting, dashboard and data visualisation layer called Workspace. Planning Analytics Workspace delivers self-service web authoring to all users. Through the Planning Analytics Workspace interface, authors have access to many visual options designed to help improve financial input templates and reports. Planning Analytics Workspace benefits include:

- Free-form canvas dashboard design

- Data entry and analysis efficiency and convenience features

- Capability to combine cube views, web sheets, text, images, videos, and charts

- Synchronised navigation for guiding consumers through an analytical story

- Browser and mobile operation

- Capability to export to PowerPoint or PDF

Source : Planning Analytics (TM1) cube

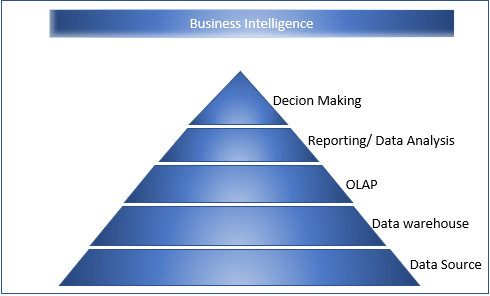

With a variety of business intelligence applications at your disposal, it’s worth investigating the best and comparing your needs against each product to get the best return on your investment.

Here are three ‘must-haves’.

1. Customisable workspace

With a business intelligence application like IBM Planning Analytics, you can enjoy a single and consistent environment in which to manage your KPIs.

So whether you need to measure business performance, evaluate and alter plans, expose gaps or test-drive the impact of potential scenarios on your business activity, you can do so in an interactive, customisable workspace.

2. Predictive analytics

Combining the predictive capabilities of IBM Watson Analytics as well as self-service data discovery, IBM Planning Analytics is a business intelligence tool that injects your performance management with the exceptional capabilities of cognitive computing.

Its natural-language interface makes searches and queries easy, with fast data discovery a given. A range of time-consuming functions are automated – such as data preparation, refinement, management and analysis – so you can focus on what’s most important while always trusting that the data provided is reliable.

3. The capacity to harness both internal and external datasets

Do you have a variety of co-workers who need to leverage insights derived from both internal and external sources on a daily basis – business analysts, line-of-business managers, financial analysts and more?

Then you’ll want a solution that allows users to access business intelligence sources and enterprise resource planning programs to derive valuable information relevant to their ongoing needs. IBM Planning Analytics puts that very information in front of the people who need it most, helping them make faster, smarter decisions that are business critical.

Finding the best business intelligence applications

No matter what industry you’re in, ‘planning’ should be the beating heart of your organisation. And when compliance and consistency are essential to the ongoing success of your operations, you need a business intelligence application that doesn’t compromise on your need for solid analytics functionality.

To learn more about how IBM Planning Analytics could be the business intelligence application for you, contact Octane Software Solutions today.

Despite the rampant growth of new data, constantly evolving technologies and the digitalisation of entire sectors, there are a few facets of business that will never be eliminated entirely: volatility, uncertainty and risk.

But with business intelligence tools, you can keep your organisation on the right track and reduce the inherent risks of day-to-day operations. Here’s how.

A complete, all-in-one business tool

When seeking out a business intelligence tool to adopt in your organisation, you’ll want one with speed, agility and flexibility, so it provides a return on your investment.

With IBM Planning Analytics, for example, you get all those things and more, leading to an all-in-one performance management solution that can be deployed either on-site or in the cloud for operational security.

In addition to its user-friendly and intuitive framework, it provides insights to help both large and small businesses drive greater process efficiency. This is essential in a fast-paced and competitive industry, where the process of analysing data quickly is vital in order to expedite decision-making and take business-critical actions.

The keys to exceptional business intelligence tools

There are plenty of business intelligence tools on the market, but not all are created equal. In order to derive the most value from your tool, you’ll want to ensure it offers:

- Speed and flexibility: You’ll want a tool that allows you to hit the ground running while also leaving room for you to build out your deployment as and when needed.

- Agility: Your business intelligence tool needs to adapt to your changing needs so it can accommodate all your budgets, plans and forecasts even as business conditions fluctuate.

- Foresight: The ability to visualise insights from both internal and external datasets. You can leverage those insights to anticipate future trends and drive your business growth.

Benefits from installing the right tool

Whether you’re a chief data officer, innovation executive or simply a jack-of-all-trades in your organisation, you need to conduct your due diligence when sourcing an appropriate business intelligence tool.

Choose IBM Planning Analytics, for example, and you’ll find it works at an inter-departmental level to streamline time-consuming activities like creating plans, budgets and forecasts in a customisable analytics environment.

Business leaders can engage with fast and flexible ‘what if’ scenario modelling to assist them in important decision-making. And perhaps most important for the wider business team, natural-language searching allows even the most inexperienced users to perform searches and make queries for powerful analysis.

To learn more about how a business intelligence tool like IBM Planning Analytics can take your enterprise to the next level, contact Octane Software Solutions today.

The right business intelligence software can help companies beat out the competition and become market leaders in their industry.

We reveal how to leverage three key benefits of business intelligence software - and overcome some potential drawbacks!

1. Integration across disparate business systems

In order to derive the best strategic insights, you typically need to analyse data from across a number of different systems. Your company’s operational results, for example, require a financial perspective. That means your business intelligence solution should be able to integrate data from various sources to generate answers to your business questions.

2. Historical analysis and reporting

Successful decision-making relies on a comprehensive understanding of how your company has grown in recent years and the reasons behind that growth. Your business intelligence solution must be able to both map and analyse historical data from years in the past. Ensure you choose a tool that can extract, manipulate and parse large amounts of data – in many enterprises, millions of database entries are standard fare.

3. Predictive capabilities

Your solution’s ability to analyse and report historical data means you’ll be able to harness current opportunities, while also predicting your company’s future steps. This power of forecasting should always remain top-of-mind when striving for success.

Overcoming potential challenges

So what about the potential drawbacks? There are a couple of cons to consider, but with the right knowledge you can overcome them to truly realise the power of your business intelligence software.